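I. Basic Theoretical Knowledge

1. Data

Data, also called data resources, is the general term for all symbols that can be fed into a computer and processed by a program. It covers the numbers, letters, symbols, and analog quantities that carry some meaning and are input to a computer for processing, and it is the most basic building block of an information system.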

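2. Big Data

Big data refers to a data set so large that acquiring, storing, managing, and analyzing it goes well beyond the capabilities of traditional database software. It has four characteristics: massive data volume, fast data flow, diverse data types, and low value density.

Big data includes structured, semi-structured, and unstructured data, and unstructured data makes up a growing share of the total. The point of big data technology is not to hold huge volumes of data but to process the meaningful data professionally. In other words, if big data is compared to an industry, the key to making that industry profitable is to improve its ability to "process" data and to add value to the data through that processing.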

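3. Data Source

A data source is a device or original medium that provides the required data. A data source stores all the information needed to establish a database connection. Just as a file can be located in a file system by its file name, the corresponding database connection can be located by supplying the correct data source name. 数字化转型网(www.szhzxw.cn)

Common data source types include relational databases, time-series databases, key-value stores, column-oriented databases, document databases, graph databases, search engine stores, object databases, MPP databases, big data stores, and tools or files.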

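4. Data Warehouse

A data warehouse is a collection of data that supports decision making at every level of an enterprise with all types of data. Its primary function is data storage, and it is built to produce analytical reports for the organization and to support decisions. It can also guide business process improvement and help monitor and manage data access time, data cost, and data quality.

Because a data warehouse is the storage space where data is consolidated, it is usually divided into layers. Common layers are the source-aligned layer (ODS), the data integration layer (EDW), the subject model layer (FDM), the common computing (or common processing) layer (ADM), and the application mart (or data mart) layer (ADS). Which layers are combined in a given warehouse design depends on the specific implementation.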

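5. Data Middle Platform

A data middle platform (数据中台) is a mechanism for continuously "putting enterprise data to use". It is a strategic choice and an organizational form: based on the enterprise's own business model and organizational structure, and supported by tangible products and an implementation methodology, it builds a mechanism that keeps turning data into assets that serve the business. A data middle platform needs four core capabilities: data aggregation and integration, data refinement and processing, data service visualization, and data value realization, so that the organization's employees, customers, and partners can use data easily.

The data middle platform is a concept and a theory rather than the name of a standalone system. It is a newer concept derived from the data warehouse (data center). Its role is to be the place where all data converges and the platform foundation that supports upper-layer data applications, that is, data enablement.

To understand the data middle platform fully and to see how it differs from the data warehouse, compare the two on three levels: data sources, construction goals, and data applications.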

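- Data sources:

The data in a data warehouse comes mainly from business databases, and its format is mostly structured.

A data middle platform is expected to draw on data from all domains, including business data, log data, event-tracking data, crawler data, and external data. The format can be structured or unstructured.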

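- Construction goals:

A data warehouse is built mainly for BI reports. The purpose is narrow: only the base data needed by the relevant analytical reports is extracted and cleaned. Adding a new report means running the data processing from ODS to ADS all over again.

The goal of a data middle platform is to integrate all of the organization's data, remove the barriers between data sets, and eliminate inconsistent data standards and statistical definitions. It usually cleans base data from many sources and, following the subject domain concept, builds several entity-centered subject domains, such as user, product, channel, and store. The data middle platform follows the "three ones": One Data, One ID, One Service. Under this idea, the platform does not merely gather the enterprise's data. It makes the data follow the same standards and definitions, makes the identifiers of the same entity unified or cross-referenced, and provides a unified data service interface, thereby delivering data enablement.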

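- Data applications:

A data warehouse mainly serves BI reports, and its data applications are built in the traditional siloed ("chimney") style, starting from scratch each time.

Data applications on a data middle platform go beyond BI reports to marketing recommendation, user profiling, AI-based decision analysis, risk assessment, business analysis, and more. Because the data has already been consolidated and accumulated in the platform, these applications can supply data to related systems quickly and shorten data development, and the results of earlier work can be shared by multiple applications.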

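6. Data Management

Data management is the planning, execution, and oversight of policies, practices, and projects that acquire, control, protect, deliver, and enhance the value of data and information assets.

It generally has three meanings:

(1) Data management comprises a series of business functions, including the planning and execution of policies, plans, practices, and projects;

(2) Data management comprises a rigorous set of management standards and processes that ensure the business functions are performed effectively;

(3) Data management comprises multiple management teams, made up of business leaders and technical experts, that are responsible for implementing the management standards and processes.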

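7. Data Governance

Definition from DAMA International (the Data Management Association): data governance is the set of activities that exercise authority and control over the management of data assets.

Definition from GB/T 34960.5-2018 (Information technology service, Governance, Part 5): the collection of control activities, performance management, and risk management related to data resources and their application. The data governance domain comprises the data management system and the data value system.

Definition from the Data Governance Institute (DGI): data governance is a system of decision rights and accountabilities for information-related processes, executed according to agreed-upon models that describe who (Who) can take what actions (What) with what information, at what time (When), under what circumstances (Where), and using what methods (How).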

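Another interpretation:

In the narrow sense, data governance exists to meet the needs of internal risk management and external regulatory compliance. It is a system that assigns decision rights and responsibilities through a series of information-related processes.

In the broad sense, data governance is the set of activities (planning, monitoring, and enforcement) that exercise authority and control over the management of data assets. It directs how the other data management functions are carried out and enforces data management policy at a high level. It is a continuous process that an organization undertakes to maximize the value of its data assets: clarifying the rights and responsibilities of data stakeholders, aligning their interests, and encouraging them to act together.

The ultimate goal is to increase the value of data. Data governance is therefore essential and forms the foundation of an enterprise's digital strategy. It is a management system that comprises organization, policies, processes, and tools.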

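Yet another interpretation:

Data governance is the set of concrete activities carried out to treat data as an enterprise asset; it is the management of data over its full life cycle.

The term combines "data" and "governance", and governance implies reform. Reform in turn requires policies, processes, and tools for re-examining and reclassifying data so that it meets usage requirements.

The goals of data governance are to improve data quality (accuracy and completeness), to ensure data security (confidentiality, integrity, and availability), and to share data resources across organizational units. It also promotes the integration, interconnection, sharing, and combined use of information resources, which raises the level of enterprise management and makes full use of information technology in operations and management.

The policies and processes of data governance give rise to data governance consulting, with deliverables such as "Data Governance Organizational Structure and Talent Management Plan", "Data Governance Implementation Path", "Data Application Scenario Implementation Path", "Metadata Management Measures and Processes", "Data Standard Management Measures and Processes", "Data Quality Problem Analysis and Rectification Plan", and "Data Governance Development Plan for the Next N Years". The tools of data governance give rise to management systems, such as metadata management, data security, data standards, and data quality systems, which generally implement the current phase of data governance on the basis of the consulting deliverables.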

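8. Data Assets

A data asset is a data resource, recorded physically or electronically (for example, documents and electronic data), that an enterprise owns or controls and that can bring it future economic benefits. Not all data in an enterprise constitutes a data asset; data assets are the data resources that can generate value for the enterprise.

Definition from GB/T 34960.5-2018 (Information technology service, Governance, Part 5): data resources that an organization owns and controls and that can produce benefits.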

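II. Theoretical Knowledge Related to Data Governance

1. Data Model

A data model, often simply called a model, is an abstraction of the characteristics of real-world data and describes the concepts and definitions of a set of data. At an abstract level it describes the static characteristics, dynamic behavior, and constraints of data. A data model describes three things: data structures, data operations (an ER diagram model has no data operations), and data constraints. It forms the basic blueprint of the data structure and serves as the strategic map of an enterprise's data assets. By level of application, data models fall into four types: subject domain, conceptual, logical, and physical.

Subject domain data model: called the subject domain model for short, this is the planning blueprint seen from the highest vantage point. It is an abstraction that consolidates, classifies, and analyzes the data in the enterprise's information systems at a high level. Subject domain models are usually divided by business, system, or department.

Conceptual data model: called the conceptual model for short, this is a user-oriented model of the real world that describes its conceptual structure. It is independent of any specific database management system (DBMS) and generally contains only entity sets and relationship sets.

Logical data model: called the logical model for short, this model builds on the conceptual model and is designed for business implementation according to the needs of business lines, business items, business processes, and business scenarios. It generally includes specific functions and processing information. The logical model is DBMS-oriented and guides implementation on different DBMSs. Common forms include the network data model and the hierarchical data model.

Physical data model: called the physical model for short, this model targets the physical representation in the computer and describes how data is organized on the storage medium. It should be designed from the logical model so that the business requirements are met. It depends not only on the specific DBMS but also on the operating system and hardware, so system performance requirements must be considered during design.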

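2. Metamodel & Metadata

A metamodel (Meta Model) is a model of a model: a specification that describes a model, namely the elements that make up the model and the relationships between those elements. The metamodel is defined relative to a model, and without a model it has no meaning.

Metadata, also called intermediary data or relay data, is data that describes data (data about data). It mainly describes the properties of data and supports functions such as indicating storage location, recording history, locating resources, and keeping file records. Metadata is information about the organization of data, its data domains, and their relationships. By purpose, metadata is divided into technical, business, operational, and management metadata.

The relationship between data model, metamodel, and metadata: a model is an abstraction of data characteristics and is the theoretical basis for building a metamodel. A metamodel is the model of metadata, that is, the data model in which metadata is stored. Because metadata is diverse, the metamodels for different types and subtypes differ and must be designed for the specific metadata.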

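- Technical metadata

Technical metadata: data that describes the concepts, relationships, and rules of the technical domain in a data system. It includes the definitions of objects and data structures in the data platform, the mapping from source data to target data, and descriptions of data transformations.

Technical metadata can be further divided into structural and associative technical metadata.

Structural technical metadata: describes the data within the IT infrastructure, such as where the data is stored, its storage type, and its lineage.

Associative technical metadata: describes the associations between data and how data flows through the IT environment. The scope of technical metadata mainly includes: technical rules (calculation, statistics, transformation, aggregation); technical descriptions of data quality rules; fields and derived fields; facts and dimensions; statistical indicators; tables, views, files, and interfaces; reports and multidimensional analyses; databases, view groups, file groups, and interface groups; source code and programs; and systems, software, and hardware.

In practice, what is collected as technical metadata is adjusted to the database in question. A relational database typically has tables, fields, stored procedures, functions, and views, whereas a key-value store has no notion of views or stored procedures.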

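- Business metadata

Business metadata is data that describes the concepts, relationships, and rules of the business domain in a data system. It includes business terms, information classifications, indicators, and statistical definitions. Seen another way, business metadata is the key metadata of a data warehouse environment and the means by which users understand the business data they access. It comes from several sources: use case modeling tools, control databases, database catalogs, and extract/transform/load (ETL) tools.

In practice, common data indicators, data elements, data tags, and report column headers are all business metadata.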

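- Operational metadata

Operational metadata covers the organization, roles, responsibilities, and processes related to metadata management, together with the operational data produced by the day-to-day running of systems. Its content mainly includes the organization, roles, responsibilities, processes, projects, and versions related to metadata management, and the operation records of production systems, such as run logs, applications, and running jobs.

Put simply, operational metadata is data that describes how data is processed.

In practice, operational metadata mainly stores ETL information, data processing strategy information, data processing scheduling information, and data processing exception information.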

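- Management metadata

Management metadata describes the management attributes of data, including the managing department and the responsible person. Making these attributes explicit assigns data management responsibility to departments and individuals and is the basis of data security management. Common management metadata includes the data owner, data quality accountability, and data security level.

Put simply, management metadata is data that describes who is responsible for managing the data.

In practice, management metadata mainly stores data ownership information (business ownership, system ownership, operations ownership, and data permission ownership) and the permission information (including data security information) of the users created in each database for accessing databases, tables, views, stored procedures, and so on.

How to define metamodels for different business scenarios and database types, and how to collect technical, business, and operational metadata, will be covered in detail in a later article, "Metadata Management System Construction".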

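3. Data Standards

Data standards are normative constraints that keep the internal and external use and exchange of data consistent and accurate. In digitalization, data is the faithful reflection of business activities in information systems. Because business objects exist in information systems as data, data standard management must be grounded in the business and must use standards to unify how business objects are defined and applied across information systems. This improves the enterprise's capabilities in business collaboration, regulatory compliance, data sharing and opening, and data analysis and application.

A data standard is a normative system agreed on from the business, technical, and management perspectives. It is also a standardization system, built to fit the organization's own situation, that covers data at the definition, operation, and application levels. It includes basic data standards and indicator data standards.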

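- Basic data standards

Basic data standards are the data standards developed, following the data standard management process, to keep the data related to all of the organization's business activities consistent and accurate and to resolve cross-business data consistency and data integration.

Basic data standards mainly cover data elements, code sets, data sets, and coding rules.

A data element (Data Element) is a unit of data whose definition, identification, representation, and permissible values are described by a set of attributes. In a given context it is typically used to build a semantically correct, independent, and unambiguous unit of information for a specific concept. A data element can be thought of as the basic unit of data; arranging several related data elements in a certain order into a whole structure yields a data model. The corresponding standard is the data element standard.

A code set is the classification coding used for the data elements in a basic information data set. The codes are the entries under a code set's classification. They can be sorted but in general form an unordered set of objects, each with a unique CODE and a corresponding VALUE. For extensibility, and to express tree-structured codes, each entry may also carry a parent CODE. Because business staff tend to read "code" as program code, a code set is sometimes renamed an "encoding set"; the corresponding standard is the coding standard.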
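The CODE, VALUE, and parent CODE structure described above can be sketched as a small in-memory code set. This is only an illustration: the class names, the `region` code set, and its entries are hypothetical and are not taken from any standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodeItem:
    code: str                          # unique CODE within the code set
    value: str                         # VALUE the code stands for
    parent_code: Optional[str] = None  # parent CODE; None for a root entry


class CodeSet:
    """Hypothetical code set that enforces unique codes and supports a tree."""

    def __init__(self, name, items):
        self.name = name
        self._items = {}
        for item in items:
            if item.code in self._items:
                raise ValueError(f"duplicate code: {item.code}")
            self._items[item.code] = item

    def value_of(self, code):
        return self._items[code].value

    def children_of(self, parent_code):
        # Entries are returned in insertion order.
        return [i.code for i in self._items.values() if i.parent_code == parent_code]

    def path_of(self, code):
        # Walk up the parent links, then reverse to get root-to-leaf order.
        path = []
        current = code
        while current is not None:
            path.append(current)
            current = self._items[current].parent_code
        return list(reversed(path))


# Illustrative entries only.
region = CodeSet("region", [
    CodeItem("CN", "China"),
    CodeItem("CN-11", "Beijing", parent_code="CN"),
    CodeItem("CN-31", "Shanghai", parent_code="CN"),
])
```

Rejecting duplicate codes at construction time reflects the requirement that each CODE be unique; the parent link is optional so that flat code sets work with the same structure.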

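- Indicator data standards

Indicator data standards are generally divided into basic indicators and calculated indicators (also called composite indicators). A basic indicator has a specific business and economic meaning and can be obtained only by processing basic data. A calculated indicator is usually computed from two or more basic indicators.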
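As a minimal sketch of that distinction, the example below derives two basic indicators directly from order records and one calculated indicator from those two. The order data and the indicator names are made up for illustration.

```python
# Hypothetical basic data: one row per order.
orders = [
    {"order_id": 1, "amount": 120.0},
    {"order_id": 2, "amount": 80.0},
    {"order_id": 3, "amount": 100.0},
]


def total_sales(rows):
    """Basic indicator: obtained only by processing basic data."""
    return sum(r["amount"] for r in rows)


def order_count(rows):
    """Basic indicator: number of orders."""
    return len(rows)


def average_order_value(rows):
    """Calculated indicator: computed from two basic indicators."""
    count = order_count(rows)
    return total_sales(rows) / count if count else 0.0
```

Keeping the calculated indicator defined purely in terms of the basic ones means a change to how `total_sales` is defined propagates to every indicator built on it.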

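4. Data Quality

Data quality is the foundation for effective data applications and is a measure of how much value the data carries.

Data quality can be measured in many ways. Typical dimensions are completeness (is any data missing), conformity (is the data stored according to the required rules), consistency (do the values conflict in meaning), accuracy (is the data wrong), uniqueness (is the data duplicated), and timeliness (is the data uploaded on time).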
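Several of these dimensions can be expressed as simple checks over a batch of records. The sketch below is illustrative only: the records, the phone pattern, the age range, and the deadline are all assumptions, and the range check is just a stand-in for accuracy, which in practice needs a trusted reference to compare against. Consistency is omitted because it requires comparing values across fields or systems.

```python
import re
from datetime import date

# Hypothetical records; None marks a missing value.
records = [
    {"id": 1, "phone": "13800000000", "age": 30, "loaded_on": date(2024, 1, 2)},
    {"id": 2, "phone": None, "age": 41, "loaded_on": date(2024, 1, 2)},
    {"id": 2, "phone": "1380000", "age": -5, "loaded_on": date(2024, 1, 5)},
]


def completeness(rows, field):
    """Share of rows where the field is present."""
    return sum(r[field] is not None for r in rows) / len(rows)


def conformity(rows, field, pattern):
    """Share of present values that match the required format."""
    present = [r[field] for r in rows if r[field] is not None]
    return sum(bool(re.fullmatch(pattern, v)) for v in present) / len(present)


def duplicate_keys(rows, key):
    """Uniqueness: key values that occur more than once."""
    seen, dups = set(), set()
    for r in rows:
        if r[key] in seen:
            dups.add(r[key])
        seen.add(r[key])
    return dups


def out_of_range(rows, field, low, high):
    """Accuracy proxy: ids of rows whose value falls outside a plausible range."""
    return [r["id"] for r in rows if not (low <= r[field] <= high)]


def late_rows(rows, field, deadline):
    """Timeliness: ids of rows loaded after the deadline."""
    return [r["id"] for r in rows if r[field] > deadline]
```

Each function returns either a ratio or the offending keys, so the results can feed a report or a threshold-based alert.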

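Data quality problems are usually traced from three angles: technical, business, and management.

- Technical aspects

On the technical side, data quality problems are traced through every stage of the data life cycle: table design, data production, data collection, data transmission, data processing and loading, and data storage.

Table design: when a business system is built, poorly designed table structures, field constraints, and validation rules lead to data being entered with no validation or the wrong validation, which causes duplicate, inaccurate, or incomplete data.

Data production: this is where business systems generate production data. Uncontrolled write permissions, missing validation on data entry pages, no protection against repeated submission, and missing checks on the logic between data items cause duplicate, inaccurate, or inconsistent data. When data that is shared or depended on by several business systems is not managed centrally, and each system is built in its own silo, the data becomes inconsistent across systems.

Data collection: data is obtained through APIs, DB links, and similar means. Poorly chosen collection points, frequency, content, mappings, parameters, or process settings lead to inefficient collection, failed collection, lost data, and failed mapping or conversion.

Data transmission: an uncontrolled network or unencrypted transmission allows data to be tampered with or lost in transit.

Data processing: when cleaning, transformation, and loading rules are written in ETL or data development without reasonable constraints and verification, the result is duplicate data, mapping errors, and similar problems.

Data storage: a poorly configured storage area, or manual changes to stored data, cause data to be lost, invalidated, distorted, or duplicated.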

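- Business aspects

On the business side, data quality problems arise from unclear requirements, frequent requirement changes, non-standard input formats, and falsified data.

Unclear requirements: when business rules, business processes, and the items to be collected are unclear, the data model built during design is flawed, which in turn causes quality problems during data production.

Frequent requirement changes: these usually stem from unclear requirements as well and affect every technical stage. Under frequent change, a small oversight, a poor design, or an error in data migration logic makes data quality problems recur and makes them hard to fix.

Non-standard input formats: this mainly affects scenarios with large volumes of free-form input. Inattention to letter case, full-width versus half-width characters, and special characters distorts or loses data.

Falsified data: to raise or lower an assessment metric, or simply to finish data collection quickly, operators alter data as they enter it, so the data is no longer truthful enough to meet quality requirements.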

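- Management aspects

On the management side, the main causes are weak awareness of data quality, data quality policies that are absent or not followed, and a lack of data accountability and assessment mechanisms.

Awareness of data quality: the organization does not recognize the importance of data quality. It focuses on building systems and neglects how data is produced, assuming the system can handle everything and that slightly poor data quality does not matter.

Data quality policy: no unified process or policy covers the entry, discovery, assignment, handling, and optimization of data quality issues. Data is then produced non-standard, lost, or conflicting, and the later steps of discovery, assignment, handling, and optimization are not controlled either. When problems occur, there is no accountability or assessment mechanism to constrain behavior, so data quality issues never form a closed loop.

The factors that affect data quality can also be divided into objective and subjective ones. Objective factors, such as system faults and poorly configured processes, cause problems as data moves between stages. Subjective factors, such as weak data awareness among staff and management shortcomings, cause problems through improper operation as data is processed at each stage.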

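5. Data Exchange

In the context of data middle platforms, data warehouses, and data governance, data exchange (Data Switching) does not mean setting up a temporary communication path between any two of several data terminal equipment (DTE) devices. It means integrating the data held in separately built application systems so that these subsystems can transmit and share information and data. This raises the utilization of information resources, is a basic goal of IT development, and ensures that distributed, heterogeneous systems can interoperate.

Put simply, data exchange today mainly takes the data produced by application systems and, by unloading and loading it, connects heterogeneous databases (sources). The common exchange modes are database to database, database to file, file to database, and file to file.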
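The unload and load steps can be sketched with two in-memory SQLite databases standing in for the source and target systems, and a CSV text buffer standing in for the exchange file. This illustrates the database-to-file and file-to-database modes only; the `customer` table and the helper names are hypothetical, and real exchanges between heterogeneous databases also need type mapping and error handling.

```python
import csv
import io
import sqlite3


def unload_to_csv(conn, table, out):
    """Database to file: write the table, with a header row, as CSV."""
    # The table name is interpolated, so it must be a trusted identifier.
    cur = conn.execute(f"SELECT * FROM {table}")
    writer = csv.writer(out)
    writer.writerow([col[0] for col in cur.description])
    writer.writerows(cur)


def load_from_csv(conn, table, src):
    """File to database: insert CSV rows using the header as column names."""
    reader = csv.reader(src)
    header = next(reader)
    columns = ", ".join(header)
    placeholders = ", ".join("?" for _ in header)
    conn.executemany(
        f"INSERT INTO {table} ({columns}) VALUES ({placeholders})", reader
    )
    conn.commit()


# Source system with some data.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE customer (id INTEGER, name TEXT)")
source.executemany("INSERT INTO customer VALUES (?, ?)", [(1, "Alice"), (2, "Bob")])

# Target system with the same table definition but no data.
target = sqlite3.connect(":memory:")
target.execute("CREATE TABLE customer (id INTEGER, name TEXT)")

# Unload from the source, then load into the target.
buffer = io.StringIO()
unload_to_csv(source, "customer", buffer)
buffer.seek(0)
load_from_csv(target, "customer", buffer)

rows = target.execute("SELECT id, name FROM customer ORDER BY id").fetchall()
```

Chaining the two helpers gives the database-to-database mode by way of a file; swapping the buffer for a real file object gives a persistent exchange file.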

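6. Data Services

A data service exposes enterprise-wide data as a service capability: the data is wrapped as a service and provided to business systems through service interfaces.

Besides consolidating data services that were previously scattered, so that they have a single point of integration and a single outlet, data services can also support configuring data APIs on top of them. With unified access and unified management, the publishing, requesting, integration and invocation, authentication, monitoring, and rate limiting of enterprise-wide data services can all be controlled in one place.

Data services give data consumers secure, unified data at the system application level.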

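7. Data Life Cycle

Everything has a life cycle, and data is no exception. The data life cycle (Data Life Cycle) runs from the creation of data through its processing and use to its eventual disposal. Under a sound management method, data that is rarely or never used is moved out of the system and retained on suitable storage. This improves system efficiency and service to customers, and it greatly reduces the storage cost of keeping data for long periods.

The data life cycle generally has three stages: online, archive (sometimes further divided into online archive and offline archive), and destruction. Managing it involves defining sensible data categories and, for each category, setting the retention period, storage medium, cleanup rules and methods, and precautions for each stage.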
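A per-category retention policy of this kind can be sketched as a lookup table plus a function that maps a data item's age to a stage. The categories and day counts below are invented for illustration; real retention periods come from business and regulatory requirements.

```python
from datetime import date

# Hypothetical policy: days spent online, then days spent in the archive.
RETENTION_POLICY = {
    "transaction_log": {"online_days": 90, "archive_days": 365},
    "customer_profile": {"online_days": 365, "archive_days": 1825},
}


def lifecycle_stage(category, last_used, today):
    """Return 'online', 'archive', or 'destroy' for a data item."""
    policy = RETENTION_POLICY[category]
    age_days = (today - last_used).days
    if age_days <= policy["online_days"]:
        return "online"
    if age_days <= policy["online_days"] + policy["archive_days"]:
        return "archive"
    return "destroy"


today = date(2024, 12, 31)
recent = lifecycle_stage("transaction_log", date(2024, 12, 1), today)
older = lifecycle_stage("transaction_log", date(2024, 6, 1), today)
oldest = lifecycle_stage("transaction_log", date(2023, 1, 1), today)
```

Passing `today` explicitly rather than reading the clock inside the function keeps the rule deterministic and easy to test.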

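8. Data Development

Data development (Data Development) means building unified, standardized tooling across the full data life cycle and managing data model design and the development, testing, and release of data processing programs in one place. It generally includes offline development and real-time development.

Offline development, also called offline data development, writes data processing logic for data from the previous day or earlier. The time unit is usually days or hours.

Real-time development, also called real-time data development, processes data as it arrives. Its latency depends mainly on transmission and storage speed, and the time unit is usually seconds or milliseconds.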

9. 数据安全

数据安全(Data Security)为数据处理系统建立和采用的技术和管理的安全保护,保护计算机硬件、软件和数据不因偶然和恶意的原因遭到破坏、更改和泄露。由此计算机网络的安全可以理解为:通过采用各种技术和管理措施,使网络系统正常运行,从而确保网络数据的可用性、完整性和保密性。

数据分类目录,又称数据目录,指根据组织数据的属性或特征,将其按照一定的原则和方法进行区分和归类,并建立起一定的分类体系和排列顺序,以便更好地管理和使用组织数据的过程。

数据目录是数据保护工作中的一个关键部分,是建立统一、准确、完善的数据架构的基础,是实现集中化、专业化、标准化数据管理的基础,也是数据资产盘点重要的依赖数据。

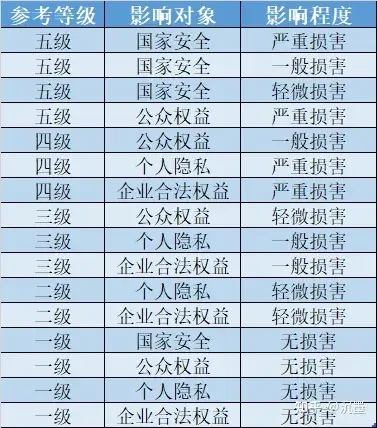

数据分级,又称敏感等级,是指在数据分类的基础上,采用规范、明确的方法区分数据的重要性和敏感度差异,按照一定的分级原则对其进行定级,从而为组织数据的开放和共享安全策略制定提供支撑的过程。

静态脱敏,是将数据抽取进行脱敏处理后,下发至测试库,脱敏后的数据与生产环境隔离,满足业务需要的同时保障生产数据库的安全。静态脱敏是不可逆的动作,可以概括为数据的“搬移并仿真替换”。

动态脱敏,是基于脱敏规则,对敏感数据的查询和调用结果进行实时脱敏,确保返回数据可用性和安全性。动态脱敏可以概括为“边脱敏,边使用”。
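The difference between the two approaches can be sketched as follows. For brevity the example replaces digits with asterisks rather than generating realistic substitute values, and the role names and sample row are hypothetical.

```python
def mask_phone(phone: str) -> str:
    """Keep the first 3 and last 4 characters; hide the rest."""
    if len(phone) < 7:
        return "*" * len(phone)
    return phone[:3] + "*" * (len(phone) - 7) + phone[-4:]


# Hypothetical production data.
production = [{"name": "Alice", "phone": "13812345678"}]


def static_mask(rows):
    """Static masking: build a separate, already masked copy for a test database."""
    return [{**row, "phone": mask_phone(row["phone"])} for row in rows]


def query(rows, role):
    """Dynamic masking: mask at read time, depending on who is asking."""
    if role == "admin":  # hypothetical privileged role
        return [dict(row) for row in rows]
    return [{**row, "phone": mask_phone(row["phone"])} for row in rows]


test_copy = static_mask(production)
analyst_view = query(production, "analyst")
admin_view = query(production, "admin")
```

In the static case the masked copy is stored and the original values never reach the test environment; in the dynamic case the stored data stays unchanged and only the query result is altered.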

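III. Theoretical Knowledge Related to Data Assets

1. Business Data

Business data (Business Data), also called transaction data, is the data produced during business or transaction processing. It arises in three main ways. First, data produced in business transactions, such as plans, sales orders, production orders, and purchase orders, most of which is created by people. Second, data produced by systems, including hardware status, software status, resource consumption, application usage, interface calls, and service health. Third, data produced by automated equipment, such as operating data and production measurements from IoT devices. Whatever its origin, this data is time-sensitive, demands fast response, and comes in large volumes.

2. Master Data

Master data (Master Data) describes an enterprise's core business entities: its core business objects and the parties that carry out its transactions. It is high-value foundational data that is reused and shared across multiple business processes, departments, and systems along the entire value chain, and it is the basis for data exchange between business applications and systems. From a business perspective, master data is relatively "fixed" and changes slowly. It is the nerve center of enterprise information systems and the foundation of business operations and decision analysis. Examples include customers, organizational units and employees, products, channels, and accounts.

3. Data Value

Data value (Data Value) is a measure of the intrinsic value of data and can be assessed from two sides: data cost and data application value. Data cost generally includes the cost of collection, storage, and computation (labor, direct costs such as IT equipment, and indirect costs) and the cost of operations and maintenance (business operation costs, technical operation costs, and so on). Data application value is measured mainly by the data's classification, frequency of use, users, effect of use, and sharing and circulation.

4. Asset Catalog

A data asset catalog (Data Asset Catalog), or asset catalog for short, is the catalog system formed by distilling the data that is valuable and usable for analysis and applications. The main purpose of compiling one is to link business scenarios to data resources and lower the barrier to understanding system data.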

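IV. Relationships

1. The Relationship Between Data Management, Data Governance, and Data Assets

Data management includes data governance. The view that "governance is part of overall data management" is now widely accepted in the industry. Data management spans many areas, and data governance is one of the most prominent. Data assets build on data governance, and their core concern is how to realize and demonstrate the value of data and deliver data enablement. The relationship between data management, data governance, and data asset management is shown in the figure.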

2. 数据治理框架

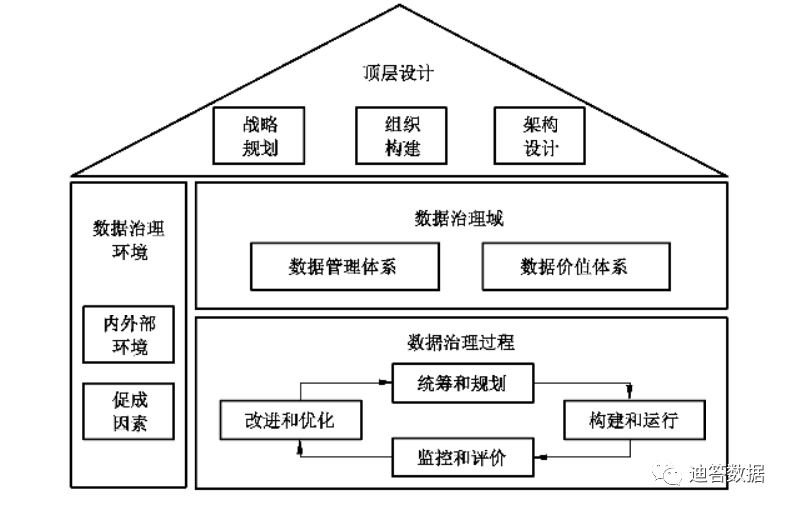

GB/T34960《信息技术服务治理》第5部分提到,数据治理框架包含顶层设计、数据治理环境、数据治理域和数据治理过程四大部分。

顶层设计包含数据相关的战略规划、组织构建和架构设计,是数据治理实施的基础。数据治理环境包含内外部环境及促成因素,是数据治理实施的保障。数据治理域包含数据管理体系和数据价值体系,是数据治理实施的对象。数据治理过程包含统筹和规划、构建和运行、监控和评价以及改进和优化,是数据治理实施的方法。

在数据治理域中,数据管理体系主要组织应围绕数据标准、数据质量、数据安全、元数据管理和数据生存周期等,开展数据管理体系的治理,至少包括:a) 评估数据管理的现状和能力,分析和评估数据管理的成熟度;b) 指导数据管理体系治理方案的实施,满足数据战略和管理要求;c) 监督数据管理的绩效和符合性,并持续改进和优化。 数字化转型网(www.szhzxw.cn)

数据价值体系主要组织应围绕数据流通、数据服务和数据洞察等,开展数据资产运营和应用的治理,至少包括:a) 评估数据资产的运营和应用能力,支撑数据价值转化和实现;b) 指导数据价值体系治理方案的实施,满足数据资产的运营和应用要求;c) 监督数据价值实现的绩效和符合性,并持续改进和优化。

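3. The Relationship Between Data Governance, Data Assets, and Data

At the data level, the data system has three parts: governance, management, and application. Governance deals with matters between people and between people and data, management covers the individual functional areas, and application is where data value is realized. In these terms, data governance centers on governance. It generally comprises data governance consulting and data governance implementation, and it combines governance with management. Data assets center on the asset. They emphasize the value and application of data: based on a data asset inventory and value analysis, they present the value of data assets and provide data applications.

Put another way, data governance enforces data management policy at a high level and is the set of activities (planning, monitoring, and enforcement) that exercise authority and control over data. Data assets focus on discovering the value of data and, by providing data application capabilities, help the enterprise grow and improve its operations.

Data is the raw material of enterprise informatization, and data governance is its cornerstone. Data assets take governed data, mine its value, and, through data operations and data analysis, empower the enterprise and drive its informatization forward.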

Translation:

This paper explains the basic concepts of data governance and data assets related theoretical knowledge

Basic theoretical knowledge

- Data

Data, or data resources, is the general term for all symbols that can be input into a computer and processed by a computer program. It covers the numbers, letters, symbols, and analog quantities that carry some meaning and are input to a computer for processing. It is the most basic element of an information system.

- Big data

Big Data refers to a data set with a large scale that greatly exceeds the capability range of traditional database software tools in terms of acquisition, storage, management and analysis. It has four characteristics: massive data scale, rapid data flow, diverse data types and low value density.

Big data includes structured, semi-structured, and unstructured data, with unstructured data increasingly becoming a major part of the data. Big data technology is not about mastering huge data information, but about specialized processing of these meaningful data. In other words, if the big data is compared to an industry, then the key to achieving profitability in this industry is to improve the “processing capacity” of the data, and realize the “value-added” of the data through “processing”.

- Data Sources

A Data Source is a device or raw medium that provides some kind of desired data. All information to establish a database connection is stored in the data source. Just as you can find a file in the file system by specifying a file name, you can find the corresponding database connection by providing the correct data source name.

Common data source types include relational databases, time-series databases, key-value stores, column-oriented databases, document databases, graph databases, search engine stores, object databases, MPP databases, big data stores, and tools or files.

- Data warehouse

A Data Warehouse is a collection of data that supports decision making at every level of the enterprise with all types of data. Its primary function is data storage, and it is created to output analytical reports for the organization and to support decision making. It can also guide business process improvement and help monitor and manage data access time, data cost, and data quality.

Because the data warehouse is the storage space where data is consolidated, it is usually divided into layers. Common layers are the source-aligned layer (ODS), the data integration layer (EDW), the subject model layer (FDM), the common computing (or common processing) layer (ADM), and the application mart (or data mart) layer (ADS). Which layers are combined in a given warehouse design depends on the specific implementation.

- Data middle platform

A data middle platform (数据中台) is a mechanism for continuously "putting enterprise data to use". It is a strategic choice and an organizational form: based on the enterprise's unique business model and organizational structure, and supported by tangible products and an implementation methodology, it builds a mechanism that keeps turning data into assets that serve the business. The data middle platform needs four core capabilities: data aggregation and integration, data refinement and processing, data service visualization, and data value realization, so that employees, customers, and partners of the organization can easily apply data.

The data middle platform is a concept and a theory, not the name of a standalone system. It is a new concept derived from the data warehouse (data center). Its role is to be the place where all data is gathered and the platform foundation that supports upper-level data applications, that is, data enablement.

To understand the data middle platform fully and to distinguish it from the data warehouse, compare the two on three levels: data sources, construction goals, and data applications.

At the data source level:

The data source of data warehouse is mainly business database, and the data format is mainly structured data.

The data middle platform is expected to draw on data from all domains, including business data, log data, event-tracking data, crawler data, and external data. The data format can be structured or unstructured.

At the level of construction objectives:

A data warehouse is built mainly for BI reports. The purpose is narrow: only the base data needed by the relevant analytical reports is extracted and cleaned. If a new report is added, the data processing from ODS to ADS has to be done again.

The goal of establishing a data middle platform is to integrate all the data of the organization, remove the barriers between data sets, and eliminate inconsistent data standards and statistical definitions. It usually cleans base data from many sources and, following the subject domain concept, establishes several entity-centered subject domains, such as user, product, channel, and store. The data middle platform follows the "three ones": One Data, One ID, One Service. Based on this idea, the platform not only gathers the enterprise's data but also makes the data follow the same standards and definitions, makes the identifiers of the same entity unified or cross-referenced, and provides a unified data service interface to deliver data enablement.

At the data application level:

The data warehouse is mainly oriented to BI reports, and its data applications are built in the traditional siloed ("chimney") style, starting from scratch every time.

Data applications on the data middle platform are not only for BI reports but also for marketing recommendation, user profiling, AI decision analysis, risk assessment, business analysis, and more. Because the data has already been consolidated and accumulated in the platform, these applications can quickly provide data to related systems and shorten data development, and the results of earlier work can be shared by multiple applications.

- Data management

Data Management is the planning, implementation, and oversight of policies, practices, and programs to capture, control, protect, deliver, and enhance the value of data and information assets.

It generally contains the following three meanings:

(1) Data management encompasses a range of business functions, including the planning and execution of policies, programs, practices and projects;

(2) Data management includes a strict set of management practices and processes to ensure that business functions are effectively performed;

(3) Data management consists of multiple management teams composed of business leaders and technical experts who are responsible for implementing management norms and processes.

- Data governance

According to the International Data Management Association (DAMA), data governance is the collection of activities that exercise authority and control over the management of data assets.

The definition given in GB/T 34960.5-2018 (Information technology service, Governance, Part 5) is: the collection of control activities, performance management, and risk management related to data resources and their application. The data governance domain includes the data management system and the data value system.

As defined by the Data Governance Institute (DGI): data governance is a system of decision rights and accountabilities for information-related processes, executed according to agreed-upon models that describe who (Who) can take what actions (What) with what information, at what time (When), under what circumstances (Where), and using what methods (How).

Another explanation:

In the narrow sense, data governance exists to meet the needs of internal risk management and external regulatory compliance. It is a system in which decision rights and responsibilities are assigned through a series of information-related processes.

Data governance in a broad sense is the set of activities that exercise authority and control over the management of data assets (planning, monitoring, and enforcement), direct how other data management functions are performed, and enforce the data management system at a high level. A series of ongoing processes undertaken by the organization to maximize the value of data assets, clarify the responsibilities of data stakeholders, align data stakeholders’ interests, and facilitate joint data actions by data stakeholders.

The ultimate goal is to enhance the value of data. Data governance is therefore essential and is the foundation on which enterprises achieve their digital strategy. It is a management system that comprises organization, policies, processes, and tools.

Here’s another explanation:

Data Governance refers to a series of concrete work that takes data as an enterprise asset, and it is the whole life cycle management of data.

Data governance is divided into data and governance from the phrase composition, and governance has the meaning of reform. Since there is a reform, it is necessary to have relevant systems, processes and tools to complete the re-sorting and classification of data to meet the requirements of data use.

The goal of data governance is to improve data quality (accuracy and integrity), ensure data security (confidentiality, integrity and availability), and realize the sharing of data resources among organizations and departments. Promote the integration, docking, sharing and comprehensive application of information resources, so as to improve the level of enterprise management and give full play to the role of information technology in operation and management.

Data governance related systems and processes will lead to data governance consulting, Such as “Data governance organizational Structure and Talent Management Plan”, “Data Governance Implementation Path”, “Data Application Scenario Implementation Path”, “Metadata management Methods and processes”, “Data standard Management Methods and processes”, “Data quality problem analysis and rectification Plan”, “Data governance Development Plan for the next N years”, etc.; Data governance tools will lead to related management systems, such as metadata management systems, data security systems, data standards systems, data quality systems, etc., and generally tend to complete the implementation and landing of current data governance based on data governance consulting results.

- Data assets

Data Asset refers to the data resources, such as documents and electronic data, that are owned or controlled by an enterprise and can bring future economic benefits to the enterprise. In the enterprise, not all data constitute data assets, data assets are data resources that can generate value for the enterprise.

GBT34960.5-2018 Information Technology Services Governance Part 5: Data resources owned and controlled by an organization that can produce benefits.

Theoretical knowledge of data governance

- Data model

A Data Model, often referred to simply as a model, is an abstraction of real-world data features used to describe the concepts and definitions of a set of data. The data model describes the static characteristics, dynamic behavior and constraints of data from the level of abstraction. The content described by the data model consists of three parts: data structure, data operation (among which there is no data operation in the ER graph data model) and data constraints, which form the basic blueprint of the data structure and also the strategic map of the enterprise data assets. According to different application levels, the data model is divided into four types: subject domain data model, conceptual data model, logical data model and physical data model.

Subject domain data model: referred to as the subject domain model, is the planning blueprint of the highest perspective, and is an abstraction that synthesizes, classifies, analyzes and utilizes the data in the enterprise information system at a higher level. In general, the topic domain model is divided into business, system and department.

Conceptual data model: called the conceptual model for short, this is a user-oriented model of the real world that describes its conceptual structure. It is independent of any specific database management system (DBMS) and generally contains only entity sets and relationship sets.

Logical data model: referred to as logical model, is a conceptual model based on business lines, business matters, business processes, business scenarios based on the needs of the design of business-oriented data model, generally including specific functions and processing information. Logical models are DBMS-oriented models that guide implementation in different DBMS systems. The common forms of logical data model include network data model, hierarchical data model and so on.

Physical data model: A physical model for short, is a model for computer physical representation that describes the organization of data on a storage medium. The design of the physical model should be based on the results of the logical model to ensure that the business requirements are met. It is not only related to the specific DBMS, but also related to the operating system and hardware, so it is necessary to consider the related requirements of system performance when designing the model.

- Metamodel & Metadata

A Meta Model is a model about a model, a specification that describes a model, specifically the elements that make up the model and the relationships between them. Metamodel is a concept relative to model, without which metamodel has no meaning.

Metadata, also known as intermediary data or relay data, is data about data. It mainly describes the properties of data and supports functions such as indicating storage locations, recording history, locating resources, and keeping file records. Metadata is information about the organization of data, its domains, and its relationships. By purpose, metadata is divided into technical metadata, business metadata, operational metadata, and management metadata.

The relationship between data model, metamodel and metadata: a model is an abstraction of data characteristics and the theoretical basis for building a metamodel. A metamodel is the model of metadata, that is, the data model in which metadata is stored. Because metadata is diverse, the metamodels for different types and subtypes differ and need to be designed for the specific metadata.

Technical metadata

Technical Metadata: data that describes the concepts, relationships, and rules of the technical domain in a data system. It includes the definitions of objects and data structures in the data platform, the mapping from source data to target data, and descriptions of data transformations.

Technical metadata, if subdivided, can also be divided into structural technology metadata and associative technology metadata.

Structural technical metadata: describes the data within the information technology infrastructure, such as where the data is stored, its storage type, and its lineage.

Associative technical metadata: describes the associations between data and the flow of data through the information technology environment. The scope of technical metadata mainly includes: technical rules (calculation/statistics/transformation/summary), technical description of data quality rules, fields, derived fields, facts/dimensions, statistical indicators, tables/views/files/interfaces, reports/multidimensional analysis, databases/view groups/file groups/interface groups, source code/programs, systems, software, hardware, etc.

In practice, what is collected as technical metadata is adjusted to the database in question. A relational database typically has tables, fields, stored procedures, functions, and views, whereas a key-value store has no notion of views or stored procedures.

Business metadata

Data that describes the concepts, relationships, and rules of the business domain in the data system, including business terms, information classifications, indicators, and statistical definitions. Seen another way, business metadata is the key metadata of the data warehouse environment and the means by which users understand the business data they access. It comes from several sources: use case modeling tools, control databases, database catalogs, and extract/transform/load (ETL) tools.

In practice, common data indicators, data elements, data tags, and report column headers are all business metadata.

Operational metadata

Organization, jobs, responsibilities, processes related to metadata management, and operational data generated by the daily operation of the system. The content of operational metadata management mainly includes: the organization, post, responsibility, process, project, version related to metadata management, and the operation records in the production operation of the system, such as operation records, applications, and operation jobs.

Simply understood, operational metadata is data that describes the process of data processing.

In practice, the main data stored by the general operation metadata are: data ETL information, data processing strategy information, data processing scheduling information, data processing exception information, etc.

Management metadata

It describes the management attributes of data, including the management department, the management responsible person, etc. By clarifying the management attributes, it is conducive to the responsibility of data management to departments and individuals, and is the basis of data security management. Common management metadata include: data owner, data quality determination, data security level, and so on.

In simple terms, administrative metadata is data that describes where data management belongs.

In practice, the main data stored by the general management metadata is: data ownership information (business ownership, system ownership, operation and maintenance ownership, data rights ownership), and the permission information (including data security information) of users’ access to libraries, tables, views, stored procedures, etc. created in each database.

How to define the metamodel based on different business scenarios and database types, how to collect technical metadata, business metadata, and operational metadata, etc., will be detailed in the subsequent compilation of the article “Metadata Management System Construction”.

- Data standards

Data Standards are normative constraints that ensure consistency and accuracy in the internal and external use and exchange of data. In the process of digitization, data is the real reflection of business activities in the information system. Since business objects exist in the form of data in information systems, management activities related to data standards need to be based on business, and standardize the unified definition and application of business objects in various information systems in the form of standards, so as to improve the capabilities of enterprises in business collaboration, regulatory compliance, data sharing and openness, data analysis and application.

A data standard is a normative system agreed on from the business, technical, and management perspectives. It is also a standardization system, built to fit the organization's own situation, that covers data at the definition, operation, and application levels. It includes basic data standards and indicator data standards.

Basic class data standard

Basic data standards are the data standards developed, following the data standard management process, to keep the data related to all business activities of the organization consistent and accurate and to resolve cross-business data consistency and data integration.

The main content of basic data standards includes data elements, code sets, data sets, and coding rules.

A data element is a unit of data whose definition, identification, representation, and permissible values are described by a set of attributes. In a given context, it is usually used to construct a semantically correct, independent, and unambiguous unit of information for a specific concept. Data elements can be understood as the basic units of data: a data model is composed of a number of related data elements arranged in a certain order. The corresponding standard is the data element standard.

A code set is a classification coding of data elements, used to describe a basic information data set. A code is an enumerable data set based on the classification coding of a given code set. In general, a code set is an unordered collection of entries, each containing a unique code value and the corresponding code name. For extensibility, and to support tree-structured codes, an entry may also carry a parent code. Because business staff tend to read the word “code” as program code, the code set is sometimes given a different name to avoid the confusion. The corresponding standard is the code set standard.
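As a minimal sketch of the structure described above, the following Python models a code set as entries with a unique code value, a name, and an optional parent code. The names `CodeEntry`, `build_code_set`, and `path_to_root`, and the region codes themselves, are hypothetical and invented for illustration; they do not come from any particular standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CodeEntry:
    """One entry of a code set: unique value, display name, optional parent."""
    value: str
    name: str
    parent: Optional[str] = None  # parent code value; None for a top-level code


def build_code_set(entries):
    """Index entries by code value, rejecting duplicates and unknown parents."""
    code_set = {}
    for entry in entries:
        if entry.value in code_set:
            raise ValueError(f"duplicate code value: {entry.value}")
        code_set[entry.value] = entry
    for entry in code_set.values():
        if entry.parent is not None and entry.parent not in code_set:
            raise ValueError(f"unknown parent code: {entry.parent}")
    return code_set


def path_to_root(code_set, value):
    """Return the code names from the given code up to its top-level ancestor."""
    names = []
    current = code_set[value]
    while current is not None:
        names.append(current.name)
        current = code_set[current.parent] if current.parent else None
    return names


# Hypothetical region code set, for illustration only.
REGION_CODES = build_code_set([
    CodeEntry("10", "North"),
    CodeEntry("1001", "North / City A", parent="10"),
    CodeEntry("20", "South"),
])
```

The sketch assumes the parent links contain no cycles; a production code set would also need versioning and an effective date for each entry.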

Indicator data standards

Indicator data standards are generally divided into basic indicator standards and calculated indicator standards (calculated indicators are also known as composite indicators). Basic indicators have a specific business and economic meaning and can be obtained directly by processing basic data. Calculated indicators are usually computed from two or more basic indicators.
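The distinction can be illustrated with a short Python sketch. It assumes two basic indicators (total revenue and total cost, each aggregated directly from basic data) and one calculated indicator derived from them (gross margin rate). The field names, the sample orders, and the choice of indicator are assumptions made for illustration.

```python
def basic_indicator_total(records, field):
    """Basic indicator: obtained directly by aggregating one field of basic data."""
    return sum(record[field] for record in records)


def gross_margin_rate(records):
    """Calculated (composite) indicator: derived from two basic indicators."""
    revenue = basic_indicator_total(records, "revenue")
    cost = basic_indicator_total(records, "cost")
    if revenue == 0:
        return None  # the rate is undefined when there is no revenue
    return (revenue - cost) / revenue


# Hypothetical order records, for illustration only.
ORDERS = [
    {"revenue": 100.0, "cost": 60.0},
    {"revenue": 300.0, "cost": 240.0},
]

print(basic_indicator_total(ORDERS, "revenue"))  # 400.0
print(gross_margin_rate(ORDERS))                 # 0.25
```

Defining the calculated indicator in terms of named basic indicators, rather than raw fields, is what lets an indicator standard keep one agreed formula per indicator.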

- Data quality

Data quality is the foundation for ensuring that data applications are effective, and it is an indicator of how much value the data holds.

There are many indicator systems for measuring data quality. Typical indicators are completeness (whether data is missing), conformity (whether data is stored according to the required rules), consistency (whether data values carry conflicting meanings), accuracy (whether data is wrong), uniqueness (whether data is duplicated), and timeliness (whether data is uploaded within the required time).
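Several of these indicators can be expressed as simple ratios over a set of records. The sketch below is one possible formulation, not a standard one: it scores missing values, rule conformance, duplication, and upload time as fractions between 0 and 1. The function names, field names, phone-number pattern, and sample rows are all assumptions for illustration.

```python
import re
from datetime import datetime


def completeness(rows, field):
    """Share of rows whose field is present and non-empty."""
    if not rows:
        return 1.0
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def conformity(rows, field, pattern):
    """Share of non-empty values that fully match the required pattern."""
    values = [row[field] for row in rows if row.get(field) not in (None, "")]
    if not values:
        return 1.0
    return sum(1 for v in values if re.fullmatch(pattern, v)) / len(values)


def uniqueness(rows, key):
    """Share of rows that carry a distinct key value."""
    if not rows:
        return 1.0
    return len({row[key] for row in rows}) / len(rows)


def timeliness(rows, field, deadline):
    """Share of rows uploaded at or before the deadline."""
    if not rows:
        return 1.0
    return sum(1 for row in rows if row[field] <= deadline) / len(rows)


# Hypothetical sample records, for illustration only.
ROWS = [
    {"id": 1, "phone": "13800000000", "uploaded_at": datetime(2024, 1, 1, 8, 0)},
    {"id": 2, "phone": "", "uploaded_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "phone": "12345", "uploaded_at": datetime(2024, 1, 2, 9, 0)},
    {"id": 4, "phone": "13900000000", "uploaded_at": datetime(2024, 1, 1, 7, 0)},
]
DEADLINE = datetime(2024, 1, 1, 12, 0)
```

Consistency and accuracy are left out because they need a second source or a reference value to compare against, which this sketch does not assume.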

The causes of data quality problems are usually examined from three sides: technical, business, and management.

Technical aspect

On the technical side, data quality problems generally arise at points across the whole data life cycle: database table design, data production, data collection, data transmission, data processing and loading, and data storage.

Database table design: table structures, field constraints, and data validation rules were designed poorly when the business system was built, so data entry is validated improperly or not at all, causing duplicate, inaccurate, and incomplete data.

Data production: this concerns the production data generated by business systems. When write permissions are not controlled in the business system, data entry pages are not validated, submissions are not restricted, and the logical relationships between data items are not enforced, data becomes duplicated, inaccurate, and inconsistent. In addition, when data that business systems share or depend on is not managed uniformly, and each system is built independently as a silo (“chimney” construction), data becomes inconsistent between systems.

Data collection: data is obtained through APIs, DB Link, and similar methods. Unreasonable settings for the collection point, collection frequency, collection content, mapping relationships, collection parameters, and collection process lead to low collection efficiency, collection failures, data loss, and failed data mapping and conversion.

Data transmission: the network is not under control and data is not encrypted in transit, so data can be tampered with or lost during transmission, causing data quality problems.

Data processing: in ETL, data development, and similar work, data cleaning rules, data conversion rules, and data loading rules are written without reasonable restrictions and validation, causing data duplication, mapping errors, and other problems.

Data storage: the data storage area is configured unreasonably, or data is adjusted manually in storage, causing data loss, invalidation, distortion, duplication, and other problems.

Business aspect

On the business side, data quality problems are caused by unclear requirements, frequent requirement changes, non-standard data input formats, and data fraud.

Unclear requirements: business rules, business processes, and the information items to be collected are not clearly defined, which leads to an unreasonable data model at design time and, in turn, to data quality problems during data production.

Frequent requirement changes: these usually stem from unclear requirements, and they affect every technical stage the data passes through. Under frequent change, a slight oversight, an unreasonable design, or incorrect data migration logic leads to recurring data quality problems that are hard to govern.

Non-standard data input format: this mainly applies to scenarios where large amounts of free-form content are entered. Inattention to letter case, full-width versus half-width characters, and special characters in the input causes data distortion and data loss.

Data fraud: to raise or lower assessment indicators, or to finish data collection work quickly, operators manipulate some data at entry time, so the authenticity of the data fails to meet quality requirements.
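Of the business-side causes above, non-standard input format is the one most readily handled in code. The following sketch shows one possible normalization step, assuming that folding full-width characters to half-width, collapsing whitespace, and ignoring letter case are all acceptable for the field in question. The function name `normalize_input` is hypothetical; `unicodedata.normalize` with the `NFKC` form is part of the Python standard library.

```python
import unicodedata


def normalize_input(text):
    """Fold full-width characters to half-width, collapse whitespace, ignore case."""
    # NFKC maps compatibility characters (e.g. full-width letters, digits,
    # and the ideographic space) to their ordinary equivalents.
    folded = unicodedata.normalize("NFKC", text)
    # split() with no arguments drops leading/trailing whitespace and
    # treats any run of whitespace as a single separator.
    collapsed = " ".join(folded.split())
    return collapsed.casefold()


# Full-width "ABC", an ideographic space, and full-width "123".
raw = "\uff21\uff22\uff23\u3000\uff11\uff12\uff13"
print(normalize_input(raw))  # abc 123
```

Case folding is not appropriate for every field (for example, case-sensitive identifiers), so in practice the rule set would be chosen per data element.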

Management aspect

On the management side, problems mainly stem from weak awareness of data quality, data quality policies that are missing or not enforced, and the lack of data accountability and data assessment mechanisms. The resulting absence of data management causes data quality problems.

Data quality awareness: the importance of data quality is not recognized. Attention goes to system construction rather than data production, on the assumption that the system is omnipotent and poor data quality does not matter.

Data quality system: there is no unified process or policy to support handling data quality problems from entry, discovery, assignment, and processing through to optimization, so data production yields non-standard data, data loss, data conflicts, and other problems. There is no control over the subsequent discovery, measurement, processing, and optimization of data problems, and no data accountability or assessment mechanism to constrain behavior when data problems occur. As a result, data quality management never forms a closed loop.

The causes of data quality problems can also be analyzed in terms of objective and subjective factors. Objective factors, such as system anomalies and improper process settings, cause data quality problems as data flows between systems. Subjective factors, such as low data awareness and management defects, lead to improper operations during data processing, which in turn cause data quality problems.

- Data exchange

In the context of the data center, the data warehouse, and data governance, data exchange does not refer to the process of establishing a temporary interconnection path for data communication between any two data terminal equipment (DTE) devices. It refers to integrating the data held in a number of scattered application information systems, so that application subsystems can transmit and share information and data, information resources are better utilized, and distributed heterogeneous systems are interconnected, which is a basic goal of informatization.

Put simply, data exchange today mainly takes the data generated by systems and interconnects heterogeneous databases (data sources) by unloading and loading data. Common data exchange modes are database to database, database to file, file to database, and file to file.
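The unload-and-load pattern behind these modes can be sketched with the Python standard library. The example below is illustrative only: it uses in-memory SQLite databases in place of real heterogeneous sources, an in-memory text buffer in place of a file on disk, and a hypothetical `customer` table. The function names are assumptions, not part of any exchange product.

```python
import csv
import io
import sqlite3


def unload_table(conn, table, out_file):
    """Unload: write every row of a table to CSV, header row first."""
    # The table name is interpolated directly, so it must come from trusted config.
    cursor = conn.execute(f"SELECT * FROM {table}")
    writer = csv.writer(out_file)
    writer.writerow([column[0] for column in cursor.description])
    writer.writerows(cursor)


def load_table(conn, table, in_file):
    """Load: insert CSV rows into an existing table with matching columns."""
    reader = csv.reader(in_file)
    header = next(reader)
    columns = ", ".join(header)
    placeholders = ", ".join("?" for _ in header)
    conn.executemany(
        f"INSERT INTO {table} ({columns}) VALUES ({placeholders})", reader
    )
    conn.commit()


def exchange(source, target, table):
    """Move a table between two databases by chaining unload and load."""
    buffer = io.StringIO(newline="")
    unload_table(source, table, buffer)
    buffer.seek(0)
    load_table(target, table, buffer)


# Hypothetical source and target, for illustration only.
source = sqlite3.connect(":memory:")
target = sqlite3.connect(":memory:")
for conn in (source, target):
    conn.execute("CREATE TABLE customer (id INTEGER, name TEXT)")
source.executemany("INSERT INTO customer VALUES (?, ?)", [(1, "Alice"), (2, "Bob")])

exchange(source, target, "customer")
copied = target.execute("SELECT id, name FROM customer ORDER BY id").fetchall()
```

CSV carries no type information, so this sketch relies on the target column types to convert values back, and NULL values would arrive as empty strings. A real exchange job would add an explicit schema mapping for that reason.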

- Data services

A data service is the capability to serve data at the enterprise level: data is packaged as services and provided to business systems through service interfaces.

In addition to integrating data services from different places so that they are connected and exposed through one unified point, data APIs can also be configured on top of data services. Through unified access and unified management, enterprise-level data services can be published, applied for, connected and invoked, authenticated, monitored, and rate-limited, achieving unified management and control of data services.

Data services provide secure and unified data for data users from the system application level.

- Data life cycle

Everything has a life cycle, and data is no exception. The data life cycle covers the generation, processing, use, and eventual retirement of data. Using a sound management method, data that is rarely or no longer used is moved out of the system and retained on verified storage devices. This improves the operating efficiency of the system, serves customers better, and significantly reduces the storage costs of retaining data long term.

The data life cycle generally includes the online stage, the archiving stage (sometimes further divided into online archiving stage and offline archiving stage), and the destruction stage. The management content includes the establishment of reasonable data categories, and the formulation of retention time, storage media, cleaning rules and methods, and precautions for different types of data at each stage.
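As a rough sketch of how retention rules might be applied, the following Python assigns a stage to a data set from its last-used date and a per-category policy. The stage names follow the paragraph above; the `RetentionPolicy` fields, the 90-day and 365-day thresholds, and the function name are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RetentionPolicy:
    """Retention rule for one data category (thresholds are illustrative)."""
    online_days: int   # how long data stays online after last use
    archive_days: int  # additional days kept in archive before destruction


def lifecycle_stage(last_used, today, policy):
    """Return 'online', 'archive', or 'destroy' for data last used on a date."""
    age = (today - last_used).days
    if age <= policy.online_days:
        return "online"
    if age <= policy.online_days + policy.archive_days:
        return "archive"
    return "destroy"


# Hypothetical policy: 90 days online, then 365 days archived.
policy = RetentionPolicy(online_days=90, archive_days=365)
today = date(2024, 6, 1)

print(lifecycle_stage(date(2024, 5, 1), today, policy))  # online
print(lifecycle_stage(date(2024, 1, 1), today, policy))  # archive
print(lifecycle_stage(date(2022, 1, 1), today, policy))  # destroy
```

A fuller version would split the archive stage into online and offline archiving and record the storage medium and cleanup method for each stage.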

- Data development

Data development refers to building unified, standardized tooling for the whole process across the full data life cycle, and uniformly managing data model design and the development, testing, and release of data processing programs. In general, data development includes offline development and real-time development.

Offline development, also known as offline data development, processes data from yesterday or earlier by writing data processing expressions. The time unit is usually days or hours.

Real-time development, also known as real-time data development, processes data as soon as it is received. Its timeliness depends mainly on transmission and storage speed, and the time unit is usually seconds or milliseconds.

- Data security

Data security is the technical and managerial protection established and adopted for data processing systems to protect computer hardware, software, and data from damage, alteration, and disclosure caused by accident or malice. The security of a computer network can accordingly be understood as using technical and management measures to keep the network system running normally, thereby ensuring the availability, integrity, and confidentiality of network data.

Data classification cataloging, also known as data cataloging, refers to the process of distinguishing and categorizing organizational data by its attributes or characteristics according to set principles and methods, and establishing a classification system and ordering so that organizational data can be better managed and used.

The data catalog is a key part of data protection work. It is the basis for establishing a unified, accurate, and complete data architecture, the basis for centralized, specialized, and standardized data management, and an important input that data asset inventory depends on.

Figure: Data classification (example)

Data grading, also known as sensitivity grading, refers to the process of distinguishing differences in the importance and sensitivity of data using standardized, explicit methods on the basis of data classification, and assigning levels according to set grading principles, so as to support security policies for opening and sharing data.

Figure 3 Sorting out data levels based on the Data Security Grading Guide for Financial Data Security (example)

In static desensitization, data is extracted, desensitized, and delivered to the test database. The desensitized data is isolated from the production environment, which meets business needs while keeping the production database secure. Static desensitization is irreversible and can be summarized as “moving and simulating replacement” of data.

Dynamic desensitization applies desensitization rules in real time to the results of queries and calls on sensitive data, ensuring that the returned data is both usable and secure. It can be summarized as “desensitization while using”.
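The difference between the two approaches can be sketched in a few lines of Python. In this illustration, static desensitization produces a separate masked copy intended for a test database, while dynamic desensitization masks each row at read time according to the caller's role and leaves the stored data untouched. The function names, the `auditor` role, the masking rule, and the sample record are assumptions for illustration.

```python
import hashlib


def mask_phone(phone):
    """Keep the first 3 and last 4 characters and mask the middle."""
    if len(phone) < 8:
        return "*" * len(phone)
    return phone[:3] + "*" * (len(phone) - 7) + phone[-4:]


def pseudonym(value, salt):
    """Replace a value with a truncated salted SHA-256 digest (no mapping kept)."""
    return hashlib.sha256((salt + value).encode("utf-8")).hexdigest()[:12]


def static_desensitize(rows, salt):
    """Static: build a masked copy of the rows for loading into a test database."""
    return [
        {**row, "name": pseudonym(row["name"], salt), "phone": mask_phone(row["phone"])}
        for row in rows
    ]


def dynamic_query(rows, role):
    """Dynamic: mask at read time by role; the stored rows are not modified."""
    for row in rows:
        if role == "auditor":  # hypothetical role allowed to see clear text
            yield dict(row)
        else:
            yield {**row, "phone": mask_phone(row["phone"])}


# Hypothetical production record, for illustration only.
PRODUCTION = [{"name": "Alice", "phone": "13812345678"}]

test_copy = static_desensitize(PRODUCTION, salt="demo-salt")
analyst_view = list(dynamic_query(PRODUCTION, role="analyst"))
auditor_view = list(dynamic_query(PRODUCTION, role="auditor"))
```

A salted hash of a low-entropy value such as a phone number can still be guessed by brute force, so real static desensitization usually combines several techniques rather than relying on hashing alone.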

Theoretical knowledge of data assets

- Business data

Business data is the data generated during business processing or transaction processing, also known as transaction data. It is generated in three main ways. First, data generated during business transactions, such as planned orders, sales orders, production orders, and purchase orders; most of this data is produced manually. Second, data generated by systems, including hardware running status, software running status, resource consumption, application usage, interface calls, and service health. Third, data generated by automated equipment, such as the operating data of devices in the Internet of Things (IoT) and production collection data. Whatever the source, this data shares common features: strong timeliness, high responsiveness, and large volume.

- Master data

Master data is the data that describes the enterprise’s core business entities, which are both the enterprise’s core business objects and the parties that carry out its transactions. It is high-value basic data that is reused and shared across the entire value chain, applied in multiple business processes across business departments and systems, and it forms the basis for data interaction between business applications and systems. From a business perspective, master data is relatively “fixed” and changes slowly. Master data is the nerve center of enterprise information systems and the basis for business operations and decision analysis. Examples include customers, corporate organizations and employees, products, channels, and accounting subjects.

- Data value

Data value is a measure of the intrinsic value of data, and it can be assessed from two angles: data cost and data application value. Data cost generally includes collection, storage, and computing costs (labor, IT equipment, and other direct and indirect costs) and operation and maintenance costs (business operation costs, technical operation costs, and so on). Data application value is mainly measured by the data's classification, frequency of use, users, effect of use, and sharing and circulation.

- Asset catalog

A data asset catalog (hereinafter referred to as an asset catalog) is a catalog system built by extracting the valuable data that can be used for analysis and application. The main purpose of compiling a data asset catalog is to establish the relationship between business scenarios and data resources and to lower the threshold for understanding system data.

Relationships

- Relationship between data management, data governance and data assets

Data management includes data governance; the view that governance is part of overall data management is widely accepted in the industry. Data management spans many areas, one of the most prominent being data governance. Data assets build on data governance, and their core concern is how to realize and demonstrate data value and complete data empowerment. The relationships among data management, data governance, and data asset management are shown in Figure 4.

Figure 4 Relationships among data management, data governance, and data asset management

- Data governance framework

Part 5 of GB/T 34960, “Information Technology Service Governance”, states that the data governance framework comprises four parts: top-level design, data governance environment, data governance domain, and data governance process.

Figure 5 Data governance framework

Top-level design includes data-related strategic planning, organization building, and architecture design, and is the foundation of data governance implementation. The data governance environment includes the internal and external environment and enabling factors, and is the guarantee of data governance implementation. The data governance domain includes the data management system and the data value system, and is the object of data governance implementation. The data governance process includes coordination and planning, building and running, monitoring and evaluation, and improvement and optimization, and is the method of data governance implementation.

Within the data governance domain, for the data management system, the organization should carry out governance around data standards, data quality, data security, metadata management, and the data life cycle, including at least: a) assessing the current state and capability of data management, and analyzing and evaluating its maturity; b) guiding the implementation of the data management system governance plan to meet data strategy and management requirements; c) monitoring the performance and compliance of data management, and continuously improving and optimizing it.

For the data value system, the organization should carry out governance of data asset operation and application around data circulation, data services, and data insights, including at least: a) evaluating the operation and application capabilities of data assets, and supporting the transformation and realization of data value; b) guiding the implementation of the data value system governance plan to meet the operation and application requirements of data assets; c) monitoring the performance and compliance of data value realization, and continuously improving and optimizing it.

- The relationship between data governance, data assets and data

At the data level, the data system has three parts: governance, management, and application. Governance resolves issues between people, and between people and data; management covers the various functional areas; application realizes the value of data. Seen along these three dimensions, data governance focuses on governance, generally comprises data governance consulting and data governance implementation, and combines the governance and management of data. Data assets focus on assets, generally on the value and application of data: based on data asset inventory and value analysis, they present the value of data assets and provide data applications.

In other words, data governance is the set of activities (planning, monitoring, and execution) that exercise authority and control over data at a high level, while data assets focus on discovering the value of data and help enterprises develop and improve their operations by providing the ability to apply data.

Data is the raw material of enterprise informatization, and data governance is its cornerstone. Data assets build on governed data: they mine the value of data, empower the enterprise through data operations and data analysis, and help enterprise informatization take off.

This article was reposted by 数字化转型网 (www.szhzxw.cn); source: 滴答数据; editing and translation: 宁檬树, 数字化转型网.