参加多人视频会议,结果只有自己是真人,这事听上去似乎匪夷所思,却真实地发生了。

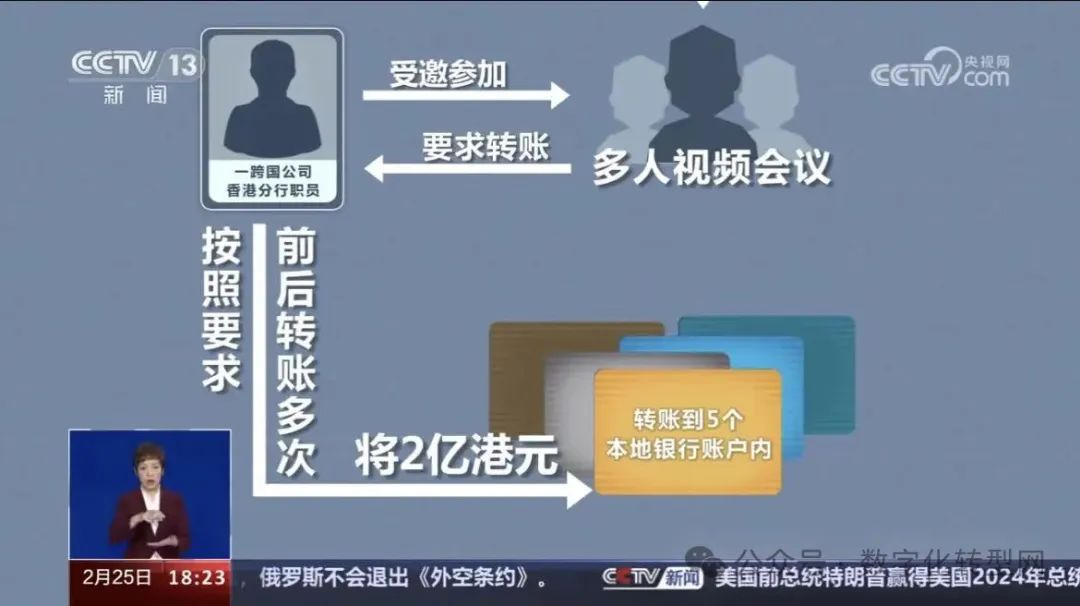

据央视新闻报道,近期,香港警方披露了一起AI“多人换脸”诈骗案,涉案金额高达2亿港元。

在该起案件中,一家跨国公司香港分部的职员受邀参加总部首席财务官发起的多人视频会议,并按照要求先后多次转账,将2亿港元转到5个本地银行账户内,其后向总部查询方知受骗。警方调查得知,这起案件中所谓的视频会议,只有受害者一人为“真人”,其余所谓参会人员,全部是经过AI换脸后的诈骗人员。

近期,在陕西西安也发生了一起“AI换脸”诈骗案例。

陕西西安财务人员张女士与老板视频通话,老板要求她转账186万元到一个指定账号。

被害人 张女士:老板让把这个款赶紧转过去,这个款非常着急,因为他声音还有视频图像都跟他人长得一样的,所以就更确信这笔款是他说的了,然后我直接就把这笔款转了。

转账之后,张女士按照规定将电子凭证发到了公司财务内部群里,然而出乎意料的是,群里的老板看到信息后,向她询问这笔资金的来由。

被害人 张女士:然后我们就打电话再跟老板去核实,老板说他没有给我发过视频,然后也没有说过这笔转账。

意识到被骗的张女士连忙报警求助,警方立刻对接反诈中心、联系相关银行进行紧急止付,最终保住了大部分被骗资金156万元。

看完这两起案例,您一定有些好奇,AI换脸背后的技术原理到底是什么?在技术层面,它是如何实现人脸的精确识别与替换,创造出逼真效果?我们来听听专家的讲解。

一、技术上如何实现人脸精确识别与替换

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:AI换脸过程主要包括人脸识别追踪、面部特征提取、人脸变换融合、背景环境渲染、图像与音频合成等几个关键步骤。其背后最核心的包括三个部分:首先,利用深度学习算法精准地识别视频中的人脸图像,并提取出如眼睛、鼻子、嘴巴等关键面部特征。其次,将这些特征与目标人脸图像进行匹配、替换、融合。最后,通过背景环境渲染并添加合成后的声音,生成逼真度较高的虚假换脸视频。

二、快速AI“换脸”仅通过一张照片就可完成

为了了解AI换脸到底能有多么逼真,记者经过与专业技术人员合作,深度体验了AI换脸技术。

技术人员首先用手机给记者拍了一张脸部照片,导入到AI软件后,让记者惊讶的是,虽然电脑摄像头前的是技术人员,但是输出的却是记者的照片,几乎可以说是“一键换脸”,不需要复杂的环境和解压操作。

更令人惊讶的是,随着技术人员面部表情变化,照片上记者的脸也跟着一起发生了相应变化。

记者:为什么技术人员这张脸动,我的照片会跟着动呢?

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:首先通过视频的采集,能够把图片里这个人脸的面部追踪定位到,定位到以后第二步他能够做一个人脸的面部特征点的采集和提取,主要就是包括嘴、鼻子跟眼睛相关的这些明显的面部特征。采集到以后, 第三步就跟把这张原始的照片,做一个变换跟融合跟整形。

记者:通过这张照片还可以做到什么?

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:当前通过这张照片技术人员已经让这张照片能够动起来,活起来了,而如果更进一步的将这张照片存下来,能够存储大量的照片的话。后期可以把这张照片合成一段简短的视频发布出来。

人工智能人脸检测技术主要通过深度学习算法实现,这种技术能够识别出面部特征并对其进行精准的分析。可以将一个人的面部表情从一张照片或视频中提取出来,并将其与另一个人的面部特征进行匹配。专家告诉记者,如果想要实现视频实时通话时采用人工智能AI换脸技术,一张照片是远远不够的,那就需要不同角度的近千张照片的采集。

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:如果要实时点对点交流的话,需要再采集更多的照片,完了进行深度学习算法模型的训练,训练出来这个模型以后灌到咱们这个视频里去,就可以做实时的变化跟转换了。这种情况下,就可以做到实时的变脸。声音的交流也可以,需要预先采集一些咱们目标人群当中的声音,然后进行模型的训练,能够把目标人群的声音还原出来。

三、AI生成仿真度极高视频 难度高投入大

专家介绍,以诈骗为目的,实施点对点视频通话,需要AI人工智能生成仿真度极高的视频。想要达到以假乱真效果用于诈骗,难度不小。

中国计算机学会安全专业委员会数字经济与安全工作组成员 方宇:针对诈骗,其实主要是通过点对点的视频通话,这时候如果采用换脸技术和声音合成做实时的换脸诈骗的话,想要完成这些技术操作,就需要投入很强的技术支持。

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:背后需要有大量的资金的投入,包括图片的采集,包括专业算法的人员等等,需要很长的一个周期,包括一些算力算法。各方面的投入,需要长时间不断地迭代,进行操作,才能达到一个相当逼真的效果,才有可能实现到诈骗的实际效果。

四、目前AI“换脸”更多应用于娱乐视频录制

除了一些不法分子企图利用AI技术实施诈骗,事实上,近年来,AI技术更多地被应用于短视频的二次创作,属于娱乐性质,越来越多的名人AI换脸视频出现在网络上,不少网友感叹,这类视频“嘴型、手势都对得上,太自然了,差点儿以为是真的。”

比如这款软件,拍下记者面部照片后,就能录制生成出一段记者秒变赛车手的视频。

▲网友发布的女士系丝巾原版视频

▲需要变换的脸的照片

▲生成视频

中国计算机学会安全专业委员会数字经济与安全工作组成员 方宇:AI技术目前我们常看到的主要是短视频换脸,通过做一些特定的动作,跳舞啊等等。这些视频其实看起来是有一些不自然的,本身也是纯属于娱乐性质的换脸。

记者发现在手机应用商城中,有数十款换脸软件,都可以做到换脸的目的。

中国网络空间安全协会人工智能安全治理专委会专家 薛智慧:如果从娱乐大众的角度来说的话,现在市面上也有很多的这些软件和工具,能够达到AI换脸的效果,但是仿真度只有六七分的样子,大众直接就能看出来。但是如果要生成一个诈骗视频来说话,就要生成咱们仿真度极高的这种点对点视频。

五、AI技术“换脸换声”可能存在法律风险

不过,AI技术也是把“双刃剑”,即使是出于娱乐使用AI换脸、AI换声,也是存在法律风险的。法律专家表示,用AI技术为他人换脸换声甚至翻译成其他语言并发布视频,可能涉嫌侵权,主要有三个方面:

一是涉嫌侵犯著作权,例如相声、小品等都属于《中华人民共和国著作权法》保护的“作品”。例如网友用AI软件将相声、小品等“翻译”成其他语言,需经过著作权人授权,否则就存在侵权问题。

二是涉嫌侵犯肖像权,根据《中华人民共和国民法典》,任何组织或者个人不得以丑化、污损,或者利用信息技术手段伪造等方式侵害他人的肖像权。未经肖像权人同意,不得制作、使用、公开肖像权人的肖像,但是法律另有规定的除外。

三是涉嫌侵犯声音权,根据《中华人民共和国民法典》规定,对自然人声音的保护,参照适用肖像权保护的有关规定。也就是说,需要取得声音权人的同意,才能够使用他人的声音。

六、学会几招 轻易识别AI“换脸换声”

AI换脸这一技术的出现,导致耳听为虚、眼见也不一定为实了。那我们该如何防范呢?专家表示,其实AI人工换脸无论做得多么逼真, 想要识别视频真假还是有一些方法的。

中国计算机学会安全专业委员会数字经济与安全工作组成员 方宇:实际上从我目前看到的深度伪造的实时视频上来看,其实还是可以通过一些方式,去进行一些验证。

那比如说我们可以要求对方在视频对话的时候呢,在脸部的面前通过挥手的方式,去进行一个识别,实时伪造的视频,因为它要对这个视频进行实时的生成和处理和AI的换脸。

那么在挥手的过程中,就会造成这种面部的数据的干扰,最终产生的效果就是我们看到的这样,挥手的过程中,他所伪造的这个人脸会产生一定的抖动或者是一些闪现,或者是一些异常的情况。

第二个就是点对点的沟通中可以问一些只有对方知道的问题,验证对方的真实性。

七、提高防范意识 避免个人生物信息泄露

专家表示,除了一些辨别AI换脸诈骗的小诀窍,我们每一个人都应该提高防范意识,在日常生活中也要做好相关防范措施,养成良好的上网习惯。首先应该是做好日常的信息安全的保护,加强对人脸、声纹、指纹等生物特征数据的安全防护;另外做好个人的手机、电脑等终端设备的软硬件的安全管理。第二,不要登录来路不明的网站,以免被病毒侵入。第三,对可能进行声音、图像甚至视频和定位采集的应用,做好授权管理。不给他人收集自己信息的机会,也能在一定程度上让AI换脸诈骗远离自己。

八、伴随AI技术发展 需要多层面监管规范

除了要提高自我防范意识,如何对AI技术加强监管,也成了越来越多人关注的问题。AI技术本身不是问题,关键是我们要怎么用它?如何形成有效监管?专家介绍,AI技术发展,需要多层面监管规范。

一是在源头端,需要进一步加强公民个人信息保护,尤其是加强对生物特征等隐私信息的技术、司法保护力度。二是在技术层面可以加强管理。例如可以让视频传播网站或者社交软件,使用专业的鉴别软件来鉴定,对AI生成视频,打上不可消除的“AI生成”水印字样。目前,这种数字水印鉴伪技术有待进一步普及。三是在法律制度层面,要进一步完善人工智能等领域相关法律法规。2023年8月15日,我国正式施行《生成式人工智能服务管理暂行办法》。《办法》从多个方面“划下红线”,旨在促进生成式人工智能健康发展和规范应用。

翻译:

Attending a multi-person video meeting where you are the only real person!

Joining a multi-person video conference only to find that you are the only real person in it sounds far-fetched, but it really happened.

According to CCTV News, Hong Kong police recently disclosed an AI “multi-person face-swap” fraud case involving as much as HK$200 million.

In this case, an employee at the Hong Kong branch of a multinational company was invited to a multi-person video conference initiated by the chief financial officer at headquarters. Following the instructions given, the employee made multiple transfers totalling HK$200 million to five local bank accounts, and discovered the fraud only after later checking with headquarters. The police investigation found that the victim was the only “real person” in the so-called video conference; all the other so-called participants were fraudsters whose faces had been swapped by AI.

A similar “AI face-swap” fraud case also occurred recently in Xi’an, Shaanxi Province.

Ms. Zhang, a finance employee in Xi’an, Shaanxi, had a video call with her boss, who asked her to transfer 1.86 million yuan to a designated account.

Victim, Ms. Zhang: The boss told me to transfer the money right away and said it was very urgent. Because the voice and the video image both matched him exactly, I was all the more convinced that the instruction came from him, so I transferred the money straight away.

After the transfer, Ms. Zhang sent the electronic receipt to the company’s internal finance group chat as required. Unexpectedly, when the boss saw the message in the group, he asked her what the payment was for.

Victim, Ms. Zhang: We then phoned the boss to check. He said he had never video-called me and had never mentioned this transfer.

Realizing she had been scammed, Ms. Zhang immediately called the police. The police at once coordinated with the anti-fraud center and contacted the relevant banks for an emergency payment stop, ultimately saving most of the defrauded funds, 1.56 million yuan.

After reading these two cases, you may well be curious: what is the technical principle behind AI face swapping? How does it recognize and replace faces precisely enough to produce a realistic result? Let’s hear from the experts.

1. How face recognition and replacement are achieved technically

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: The AI face-swap process mainly involves several key steps: face detection and tracking, facial feature extraction, face transformation and fusion, background rendering, and image and audio synthesis. At its core are three parts. First, deep learning algorithms precisely identify the face in the video and extract key facial features such as the eyes, nose and mouth. Second, these features are matched, replaced and fused with the target face image. Finally, the background is rendered and synthesized audio is added, producing a fake face-swapped video with a fairly high degree of realism.
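The “match, replace and fuse” step described above relies on lining up the facial feature points of one face with those of another. The report does not say which algorithm is used, so the following is only a minimal sketch of one common building block: a least-squares similarity transform (scale, rotation, translation) between two sets of 2D landmarks. It assumes the landmarks have already been extracted by some detector that is not shown; the function names `fit_similarity` and `apply_similarity` and the sample coordinates are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def fit_similarity(src: List[Point], dst: List[Point]) -> Tuple[complex, complex]:
    """Least-squares fit of dst ~= a * src + b, with points treated as complex numbers.

    abs(a) is the scale, the phase of a is the rotation, and b is the translation.
    """
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two matching landmark pairs")
    z = [complex(x, y) for x, y in src]
    w = [complex(x, y) for x, y in dst]
    z_mean = sum(z) / len(z)
    w_mean = sum(w) / len(w)
    # Closed-form solution of the centred least-squares problem.
    num = sum((zi - z_mean).conjugate() * (wi - w_mean) for zi, wi in zip(z, w))
    den = sum(abs(zi - z_mean) ** 2 for zi in z)
    if den == 0:
        raise ValueError("source landmarks must not all coincide")
    a = num / den
    b = w_mean - a * z_mean
    return a, b


def apply_similarity(a: complex, b: complex, points: List[Point]) -> List[Point]:
    """Map points through the fitted transform."""
    mapped = []
    for x, y in points:
        p = a * complex(x, y) + b
        mapped.append((p.real, p.imag))
    return mapped


# Hypothetical landmarks (two eyes, nose tip, two mouth corners) in pixel coordinates.
photo_landmarks = [(30.0, 40.0), (70.0, 40.0), (50.0, 60.0), (38.0, 80.0), (62.0, 80.0)]
frame_landmarks = [(130.0, 95.0), (210.0, 95.0), (170.0, 135.0), (146.0, 175.0), (194.0, 175.0)]

a, b = fit_similarity(photo_landmarks, frame_landmarks)
aligned = apply_similarity(a, b, photo_landmarks)
```

Once the photo’s landmarks are aligned with the face in the video frame, a real system would still have to warp and blend the pixels; that part is outside this sketch.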

2. A quick AI “face swap” can be done with just one photo

To find out just how realistic AI face swapping can be, the reporter worked with professional technicians to try the technology first-hand.

The technician first took a photo of the reporter’s face with a mobile phone and imported it into the AI software. To the reporter’s surprise, although it was the technician sitting in front of the computer’s camera, the output showed the reporter’s photo. It was practically a “one-click face swap”, with no complicated environment setup or unpacking steps required.

Even more surprisingly, as the technician’s facial expression changed, the reporter’s face in the photo changed accordingly.

Reporter: Why does my photo move when the technician’s face moves?

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: First, through video capture, the face in the picture is tracked and located. Once it is located, the second step is to collect and extract the facial feature points, mainly the distinctive features around the mouth, nose and eyes. After that, the third step is to transform, fuse and reshape the original photo accordingly.

Reporter: What else can be done with this photo?

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: So far, the technicians have made this one photo move and come to life. Going a step further, if the images are saved and a large number of them are stored, they can later be combined into a short video and published.

AI face detection is mainly implemented with deep learning algorithms, which can recognize facial features and analyze them precisely. A person’s facial expression can be extracted from a photo or video and matched to another person’s facial features. The expert told the reporter that a single photo is far from enough for AI face swapping in a real-time video call; that requires collecting nearly a thousand photos from different angles.

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: For real-time, point-to-point communication, more photos need to be collected and then used to train a deep learning model. Once trained, the model is applied to the video, and it can transform the face in real time. Voice works the same way: recordings of the target person’s voice are collected in advance and used to train a model that can reproduce that voice.

3. Generating highly realistic AI video is difficult and costly

According to the experts, point-to-point video calls for the purpose of fraud require AI to generate video with an extremely high degree of realism. Achieving a result convincing enough to pass as genuine is no easy task.

Fang Yu, member of the Digital Economy and Security Working Group of the Security Professional Committee of the China Computer Federation: Fraud is mainly carried out through point-to-point video calls. Using face swapping and voice synthesis for real-time face-swap fraud in that setting requires very strong technical support.

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: It takes a large financial investment behind the scenes, including image collection, specialist algorithm engineers, computing power and algorithms, over a long period. Only with investment on all these fronts and continuous iteration over a long time can the result become realistic enough to actually work for fraud.

4. For now, AI “face swapping” is mostly used in entertainment videos

Apart from criminals trying to use AI to commit fraud, in recent years the technology has in fact been used more for derivative short-video creation, which is entertainment in nature. More and more AI face-swap videos of celebrities are appearing online, and many netizens remark that in such videos “the mouth movements and gestures all match up; it is so natural that I almost thought it was real.”

With this app, for example, after a photo of the reporter’s face is taken, it can generate a video in which the reporter instantly turns into a racing driver.

▲ Original video, posted by a netizen, of a woman tying a silk scarf

▲ Photo of the face to be swapped in

▲ Generated video

Fang Yu, member of the Digital Economy and Security Working Group of the Security Professional Committee of the China Computer Federation: The AI technology we commonly see at present is mainly short-video face swapping, with people performing specific actions such as dancing. These videos actually look somewhat unnatural, and they are purely for entertainment.

The reporter found dozens of face-swap apps in mobile app stores, all of which can swap faces.

Xue Zhihui, expert at the Artificial Intelligence Security Governance Committee of the China Cyberspace Security Association: For entertainment purposes, there are many apps and tools on the market that can produce an AI face-swap effect, but the realism is only about six or seven out of ten, and the public can spot it right away. Producing a video for fraud, however, requires point-to-point video with an extremely high degree of realism.

5. AI “face and voice swapping” may carry legal risks

However, AI technology is also a “double-edged sword”. Even using AI face or voice swapping for entertainment carries legal risks. Legal experts say that using AI to swap another person’s face or voice, or even to translate their speech into another language, and then publishing the video may constitute infringement, mainly in three respects:

First, it may infringe copyright. Crosstalk (xiangsheng) and comedy sketches, for example, are “works” protected by the Copyright Law of the People’s Republic of China. If netizens use AI software to “translate” crosstalk or sketches into other languages, they need authorization from the copyright owner; otherwise it is infringement.

Second, it may infringe portrait rights. Under the Civil Code of the People’s Republic of China, no organization or individual may infringe another person’s portrait rights by vilifying or defacing the portrait, or by forging it using information technology. Without the consent of the portrait right holder, no one may produce, use or publish that person’s portrait, unless the law provides otherwise.

Third, it may infringe voice rights. Under the Civil Code of the People’s Republic of China, the protection of a natural person’s voice is governed by reference to the relevant provisions on portrait rights. In other words, another person’s voice may be used only with that person’s consent.

6. Learn a few tricks to spot AI “face and voice swapping” easily

With the advent of AI face swapping, hearing is no longer believing, and seeing is not necessarily believing either. So how can we protect ourselves? The experts say that however realistic an AI face swap is, there are still ways to tell whether a video is real.

Fang Yu, member of the Digital Economy and Security Working Group of the Security Professional Committee of the China Computer Federation: Judging from the real-time deepfake videos I have seen so far, it is still possible to verify them in a few ways.

For example, we can ask the other party to wave a hand in front of their face during the video call. A video forged in real time has to be generated, processed and face-swapped by AI on the fly.

The waving therefore interferes with the facial data. The result, as we can see here, is that while the hand is waving, the forged face jitters, flickers or shows other anomalies.

The second method is to ask, during point-to-point communication, questions that only the real person would be able to answer, to verify the other party’s identity.
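The hand-wave check above amounts to looking for sudden instability in the face region. As a rough illustration only, the sketch below scores how far facial landmarks jump between consecutive frames and flags the transitions that stand out from the rest of the call. It assumes per-frame landmarks are already available from some detector that is not shown; `jitter_scores`, `flag_unstable`, the `factor` and `floor` parameters and their default values are hypothetical choices, not a validated deepfake detector.

```python
from math import hypot
from statistics import median
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def jitter_scores(frames: Sequence[Sequence[Point]]) -> List[float]:
    """Mean landmark displacement between each pair of consecutive frames."""
    scores = []
    for prev, cur in zip(frames, frames[1:]):
        if len(prev) != len(cur) or not cur:
            raise ValueError("every frame needs the same non-empty set of landmarks")
        moved = [hypot(cx - px, cy - py) for (px, py), (cx, cy) in zip(prev, cur)]
        scores.append(sum(moved) / len(moved))
    return scores


def flag_unstable(scores: Sequence[float], factor: float = 5.0, floor: float = 1.0) -> List[int]:
    """Indices of transitions whose jitter exceeds max(floor, factor * median jitter)."""
    if not scores:
        return []
    limit = max(floor, factor * median(scores))
    return [i for i, score in enumerate(scores) if score > limit]


# Synthetic example: two landmarks drift slowly, except for one glitching frame.
frames = [[(i * 0.1, 0.0), (10.0 + i * 0.1, 0.0)] for i in range(10)]
frames[5] = [(x + 8.0, y + 6.0) for x, y in frames[5]]  # simulated jump while the hand passes

suspicious = flag_unstable(jitter_scores(frames))
```

In this synthetic data the transitions into and out of the glitching frame are the ones flagged. On real video, natural head movement and the hand occluding the face also move landmarks, so any threshold would have to be tuned and the result treated as a hint rather than proof.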

7. Raise awareness and avoid leaking personal biometric information

The experts say that beyond these tips for spotting AI face-swap fraud, each of us should be more vigilant, take precautions in daily life and develop good online habits. First, protect your information day to day: strengthen the protection of biometric data such as your face, voiceprint and fingerprints, and manage the software and hardware security of personal devices such as phones and computers. Second, do not visit websites of unknown origin, to avoid malware infection. Third, manage permissions carefully for apps that may collect audio, images, video or location. Not giving others the chance to collect your information also helps, to some extent, to keep AI face-swap fraud at bay.

8. As AI develops, regulation is needed at multiple levels

Beyond raising personal awareness, how to strengthen oversight of AI has become a concern for more and more people. AI technology itself is not the problem; the key questions are how we use it and how to regulate it effectively. According to the experts, the development of AI requires regulation at multiple levels.

First, at the source, the protection of citizens’ personal information needs to be strengthened further, especially technical and judicial protection of private information such as biometric data. Second, management can be tightened at the technical level. For example, video platforms and social apps could use professional detection software to identify AI-generated videos and stamp them with a non-removable “AI-generated” watermark. At present, this kind of digital-watermark authentication technology has yet to be widely adopted. Third, at the level of the legal system, laws and regulations on AI and related fields need to be improved further. On August 15, 2023, China’s Interim Measures for the Management of Generative Artificial Intelligence Services officially took effect. The Measures “draw red lines” in several respects, aiming to promote the healthy development and regulated application of generative AI.
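To make the idea of a digital watermark concrete, here is a deliberately simple sketch that hides an “AI-generated” tag in the least significant bit of raw pixel bytes. This is an assumption-laden toy: the report does not describe how platforms would implement watermarking, the names `embed_tag` and `extract_tag` are hypothetical, and a plain least-significant-bit mark is easily destroyed by re-encoding or resizing, so it falls well short of the non-removable watermark the experts call for.

```python
def embed_tag(pixels: bytes, tag: bytes) -> bytes:
    """Hide tag in the least significant bit of successive pixel bytes (MSB first)."""
    bits = [(byte >> shift) & 1 for byte in tag for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("carrier is too small for the tag")
    marked = bytearray(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & 0xFE) | bit  # overwrite only the lowest bit
    return bytes(marked)


def extract_tag(pixels: bytes, length: int) -> bytes:
    """Read back a tag of the given byte length from the lowest bits."""
    if length * 8 > len(pixels):
        raise ValueError("carrier is too small to contain a tag of that length")
    tag = bytearray()
    for k in range(length):
        value = 0
        for bit_index in range(8):
            value = (value << 1) | (pixels[k * 8 + bit_index] & 1)
        tag.append(value)
    return bytes(tag)


# Stand-in for one frame of raw 8-bit pixel data.
frame = bytes(range(256))
label = b"AI-generated"

marked_frame = embed_tag(frame, label)
recovered = extract_tag(marked_frame, len(label))
```

Each pixel byte changes by at most one intensity level, which is why such a mark is invisible to the eye; robust production schemes trade that simplicity for resistance to compression and editing.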

由数字化转型网(www.szhzxw.cn)转载而成,来源于央视新闻端;编辑/翻译:数字化转型网宁檬树。
