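Generative artificial intelligence, or AIGC (Artificial Intelligence Generated Content), refers to technology that uses AI methods such as generative adversarial networks and large pre-trained models to learn and recognize patterns in existing data and to generate related content with appropriate generalization ability. Its core idea is to use AI algorithms to generate content of a certain creativity and quality: after training on large amounts of data, an AIGC model can produce content related to the conditions or instructions it is given.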

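The origins of AIGC can be traced to 1950, when Alan Turing proposed the famous “Turing test” in his paper “Computing Machinery and Intelligence” as an experimental method for judging whether a machine has “intelligence”, that is, whether it can imitate human thinking to “generate” content. In 1957, Lejaren Hiller and Leonard Isaacson completed the first piece of music in history composed by a computer, which can be regarded as the beginning of AIGC. After more than half a century of development, driven by the accumulation of large volumes of valuable data, large gains in computing power, and the introduction and application of deep learning algorithms, AI technology has spread to many industries. It plays an important role in scenarios such as natural language processing (NLP), computer vision, recommendation systems, and predictive analytics, transforming production and daily life in those scenarios and bringing great convenience to society.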

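From the perspective of AIGC engineering applications, this article discusses the current state and trends of AIGC development in China and abroad, classifies current AIGC application products on that basis, describes the AIGC technical architecture in detail, and finally sets out the opportunities and challenges AIGC faces.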

I. Development status of AIGC as represented by ChatGPT

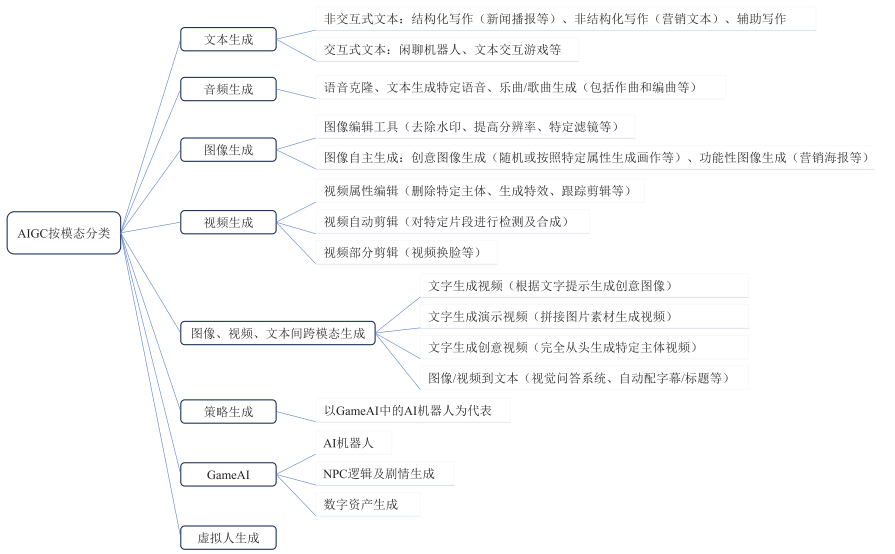

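As the ChatGPT boom continues, statistics from the 51GPT website indicate that there are now 2,722 AIGC application tools worldwide. Their continuing emergence has further accelerated the commercialization of AIGC and is gradually completing the industry ecosystem. As shown in Figure 1 (classification of AIGC application modalities), the main modalities of current AIGC products are audio generation, video generation, text generation, image generation, and cross-modal generation among images, video, and text. The following subsections review the state and trends of AIGC applications abroad and in China.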

1. AIGC application development abroad

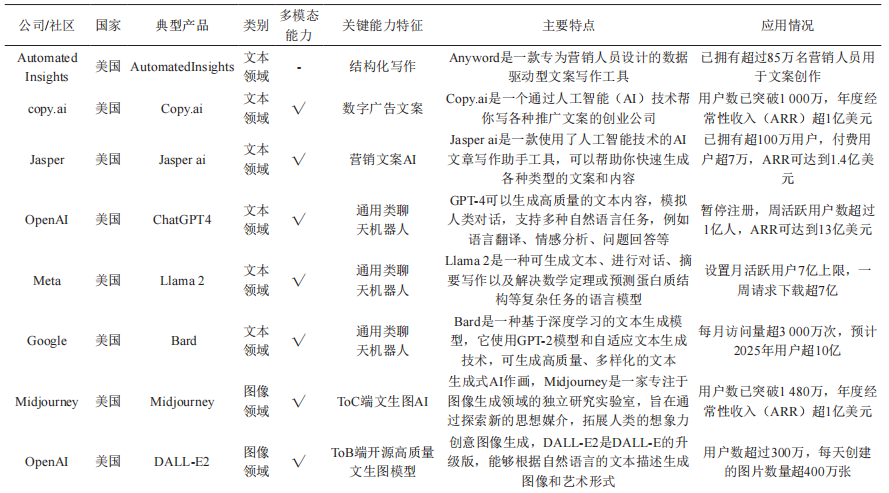

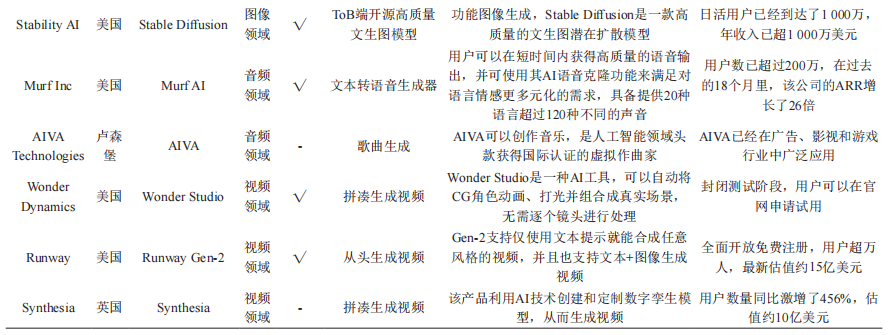

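Drawing on the relevant literature and survey reports, the leading AIGC application products abroad are classified by text, image, audio, and video; representative products are listed in Table 1.

Table 1. Typical AIGC products abroad and their characteristics

Table 1 shows that AIGC application products abroad come mainly from the United States, and that the great majority have multimodal capabilities, which is an important direction for the evolution and deployment of AIGC products. Technically, it is the accumulation and convergence of AI technologies such as generative algorithms, pre-trained models, and multimodality that produced the AIGC boom. The generative algorithms mainly include deep learning approaches such as the Transformer, flow-based generative models, and diffusion models. 数字化转型网(www.szhzxw.cn)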

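By basic type, pre-trained models mainly comprise: (1) NLP pre-trained models, such as Google's LaMDA and PaLM and OpenAI's GPT series; (2) computer vision (CV) pre-trained models, such as Microsoft's Florence; and (3) multimodal pre-trained models, which fuse text, images, audio, video, and other content forms. Pre-trained models have become more general-purpose, versatile AI models mainly thanks to multimodal technology, that is, machine learning over fused representations of images, sound, language, and other modalities. Multimodal technology has increased the diversity of AIGC content and given AIGC more general capabilities.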

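In terms of market adoption, OpenAI's ChatGPT, built on GPT-4, is clearly in the lead. According to information disclosed at OpenAI's developer conference, ChatGPT has passed 100 million weekly active users since its launch, more than 2 million developers and customers are building on the company's API, and 92% of Fortune 500 companies use its products. OpenAI also announced GPTs, which let users quickly create customized versions of ChatGPT so that anyone can have a large model of their own; the more capable GPT-4 Turbo model, which supports a 128k context window and has knowledge updated to April 2023; the upcoming GPT Store; and the new Assistants API for building AI agents.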

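2. AIGC application development in China

In China, following the releases of Baidu's ERNIE Bot (文心一言), Alibaba's Tongyi Qianwen (通义千问), iFLYTEK's Spark (讯飞星火) large model, and Zhipu AI's ChatGLM, companies such as Meituan, Baichuan Intelligence, Unisound, Meitu, and Tencent have also entered the large-model race, and the “arms race” around large models has reached fever pitch.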

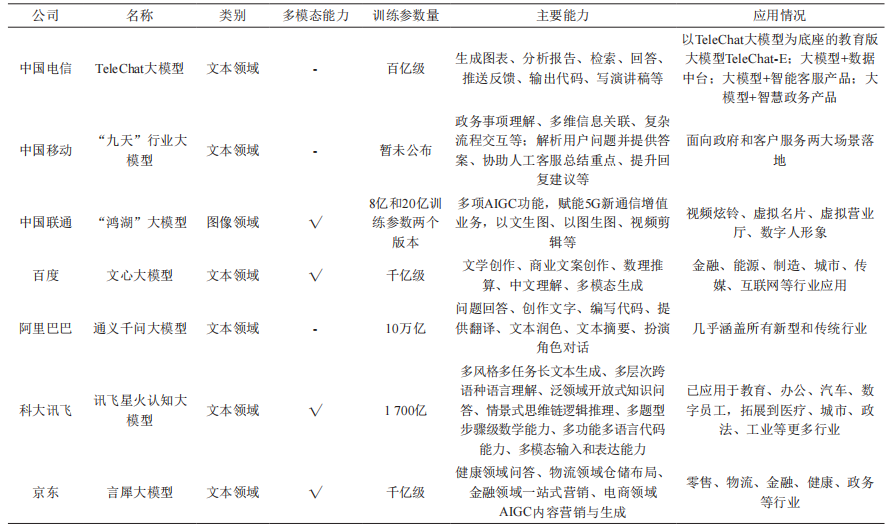

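At the end of May, the New Generation Artificial Intelligence Development Research Center of the Ministry of Science and Technology released the China Artificial Intelligence Large Model Map Research Report (《中国人工智能大模型地图研究报告》), according to which 79 large models with more than 1 billion parameters had been released in China, approaching a “war of a hundred models”. Facing the broad application prospects of AIGC, telecom operators urgently need to plan and position themselves early to avoid being reduced to mere pipes. Accordingly, China's three major operators have each released AI large models. On June 28, during the 2023 Mobile World Congress Shanghai, China Unicom released the image-text generation model “Honghu Image-Text Large Model 1.0” (鸿湖图文大模型1.0), which supports text-to-image generation, video editing, and image-to-image generation. On July 6, at the “Computing-Network Integration, Creating the Future” (算网一体·融创未来) sub-forum of the World Artificial Intelligence Conference, China Telecom officially released its large language model TeleChat and demonstrated how it empowers three areas: data middle platforms, intelligent customer service, and smart government. On July 8, at the “Large Models and Deep Industry Intelligence” (大模型与深度行业智能) innovation forum of the 2023 World Artificial Intelligence Conference, China Mobile officially released the Jiutian·Haisuan (九天·海算) government-affairs large model and the Jiutian (九天) customer-service large model. Based on the latest AIGC releases, the main technical characteristics of typical Chinese AIGC products are shown in Table 2.

Table 2. Typical AIGC products in China and their characteristics

Chinese AIGC products are iterating at an accelerating pace. Like their counterparts abroad, they are gradually penetrating industries such as news media, smart cities, biotechnology, smart office, film and television production, smart education, smart finance, smart healthcare, smart factories, and lifestyle services, and are gradually acquiring multimodal capabilities. However, constrained by factors such as restrictions on computing chips and undisclosed algorithms, their capabilities still lag noticeably behind comparable products abroad. QbitAI Think Tank (量子位智库) forecasts that China's AIGC industry will be in a cultivation and exploration phase from 2023 to 2025, with an expected compound annual growth rate of 25%, that the industry will enter a phase of rapid growth from 2026 to 2030, and that the Chinese market could reach RMB 1,149.1 billion in 2030.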

II. Technical architecture of AIGC

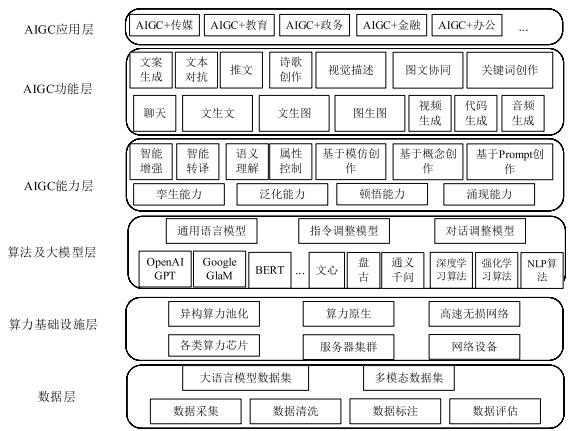

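Existing technical architectures for generative AI are relatively coarse: they do not fully cover the core elements of AIGC technology, and they are not further refined into layers according to AIGC's technical characteristics and applications. Building on them, this article proposes an AIGC technical architecture intended to make those characteristics clearer. As shown in Figure 2 (the AIGC technical architecture), it consists of six layers: the data layer, the computing infrastructure layer, the algorithm and large-model layer, the AIGC capability layer, the AIGC function layer, and the AIGC application layer.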

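(1) Data layer

Data is one of the three core elements of AIGC, and the quality, quantity, and diversity of the data in the data layer determine how well a large model trains. Datasets are built mainly through data collection, data cleaning, data labeling, model training, model testing, and product evaluation. Corresponding to the two kinds of model used in current AIGC applications, datasets fall into large language model datasets and multimodal datasets. Large language model datasets are drawn mainly from Wikipedia, online encyclopedias, books, journals, web data, search data, business-side (B-end) industry data, and specialized databases; multimodal datasets combine two or more modalities (text, image, video, audio, and so on).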
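The data-cleaning step of the dataset pipeline can be illustrated with a minimal sketch. It assumes records are plain text strings; the function names and the `min_chars` threshold are hypothetical choices for illustration, not part of any pipeline described in this article. It normalizes whitespace, drops very short records, and removes exact duplicates.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Collapse runs of whitespace into single spaces and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def clean_corpus(records: list[str], min_chars: int = 20) -> list[str]:
    """Drop very short records and exact duplicates (after normalization)."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for raw in records:
        text = normalize(raw)
        if len(text) < min_chars:
            continue  # too short to be useful training text (assumed threshold)
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # exact duplicate of an earlier record
        seen.add(digest)
        cleaned.append(text)
    return cleaned
```

Real pipelines typically add near-duplicate detection, language filtering, and quality scoring on top of steps like these.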

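(2) Computing infrastructure layer

The computing infrastructure layer provides the compute foundation for the large-model layer, supplying the compute needed for both training and inference. Compute chips mainly include graphics processing units (GPUs), tensor processing units (TPUs), neural processing units (NPUs), and various other AI accelerator chips. Because large-model training requires enormous compute and is sensitive to latency, the chips are generally housed in a single data center and organized as a high-performance AI cluster. To keep inter-server latency low and meet bandwidth requirements, the servers are interconnected by a dedicated InfiniBand network or by a switched network based on RoCE (RDMA over Converged Ethernet), forming a high-performance intelligent computing cluster. Dedicated InfiniBand networks are relatively closed, complex to operate, and expensive; they are the main choice abroad, whereas in China RoCE is mainly used to build a unified, converged network architecture. One example is Huawei's hyper-converged data center network solution, which uses its iLossLess intelligent lossless algorithm and combines three key technologies (flow control, congestion control, and intelligent lossless storage networking) to build a lossless Ethernet that helps data center networks in AI scenarios reach the goal of “zero packet loss, low latency, and high throughput”. Furthermore, since different kinds of compute chips suit different industry applications, there is a pressing need to build heterogeneous compute pools and to raise effective compute utilization through efficient scheduling and load-balancing algorithms, thereby achieving “computing-native” operation (算力原生).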

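(3) Algorithm and large-model layer

The main algorithms currently used in large models are deep learning, reinforcement learning, and natural language processing algorithms. Deep learning algorithms mainly include convolutional neural networks (CNNs), commonly used for image recognition and computer vision tasks, and recurrent neural networks (RNNs), commonly used for processing sequence data. Reinforcement learning algorithms are important for decision-making and control in large models. Deep reinforcement learning combines the strengths of deep learning and reinforcement learning to broaden the range of applications; common algorithms include Deep Q-Networks (DQN), Deep Deterministic Policy Gradient (DDPG), Proximal Policy Optimization (PPO), and Trust Region Policy Optimization (TRPO). Natural language processing algorithms mainly include text classification, word segmentation, named entity recognition, sentiment analysis, machine translation, question answering, and speech recognition.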

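Drawing on the compute supplied by the infrastructure layer, the large-model layer builds general-purpose and industry-specific large models. General-purpose models are usually built by technology companies with strong technical capabilities. Providers of professional or industry-specific models mostly start from open-source algorithm frameworks and combine a small amount of general data with high-quality domain data to train domain models at lower cost. Examples are the models discussed in Section I.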

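Large models generally fall into three types: general (or base) language models, instruction-tuned models, and dialogue-tuned models. A general (base) language model is usually trained on large amounts of unlabeled text to learn the statistical regularities of language without any specific task in view; it is trained to predict the next word given the preceding text. An instruction-tuned model starts from a general language model and is further trained on task-specific data so that it responds appropriately to a given instruction. Such models can understand and carry out specific language tasks such as question answering, text classification, summarization, writing, and keyword extraction, and are trained to predict the response to the instruction in the input. A dialogue-tuned model also starts from a general language model and is further trained on dialogue data so that it can hold a conversation. Such models can understand and generate dialogue, for example a chatbot's replies, and are trained to converse by predicting the next response.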
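The difference between the three model types lies largely in how each training example is framed. The sketch below is illustrative only: the `Example` structure, the function names, and the prompt templates are assumptions made for this illustration and do not correspond to any specific model's data format.

```python
from dataclasses import dataclass


@dataclass
class Example:
    context: str  # text the model conditions on
    target: str   # text the model is trained to predict


def base_example(text: str, split_at: int) -> Example:
    """Base language model: predict the continuation of raw, unlabeled text."""
    return Example(context=text[:split_at], target=text[split_at:])


def instruction_example(instruction: str, response: str) -> Example:
    """Instruction-tuned model: predict the response to a given instruction."""
    context = f"Instruction: {instruction}\nResponse:"
    return Example(context=context, target=" " + response)


def dialogue_example(turns: list[tuple[str, str]]) -> Example:
    """Dialogue-tuned model: predict the next reply given the conversation so far."""
    history, (speaker, reply) = turns[:-1], turns[-1]
    lines = [f"{who}: {what}" for who, what in history]
    lines.append(f"{speaker}:")  # the model must produce this speaker's reply
    return Example(context="\n".join(lines), target=" " + reply)
```

In all three cases the underlying objective is still next-token prediction; only the data that supplies the context and target changes.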

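(4) AIGC capability layer

The AIGC capability layer shows itself in four capabilities: twinning, generalization, grokking (顿悟), and emergence. Digital twinning uses models and data to simulate and reproduce the dynamic characteristics of physical-world objects over their entire life cycle, with evaluation possible in both directions. It is an advanced form of digitalization and a core AIGC capability; it helps industries cut costs and raise efficiency, and combined with today's digital humans it creates favorable conditions for the metaverse. Generalization means that AIGC large models focus on integrating and transforming knowledge and so show universality and versatility across different content-generation tasks. Emergence means that once a model passes a certain scale its performance improves markedly and it shows striking, unexpected abilities. Grokking means an ability that appears suddenly after repeated learning and training, and generally refers to achieving emergent abilities with fewer parameters and a smaller training set than before. Generalization, grokking, and emergence interact to improve what AIGC can deliver: through algorithm tuning and other means they lower the requirements on parameter count and training data while improving the universality and versatility of large models across content-generation tasks.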

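(5) AIGC function layer

The AIGC function layer builds on the capabilities of different large models to present diverse technical scenarios, such as text-to-text, text-to-image, image-to-image, joint image-text generation, video generation, chatbots, audio generation, and code generation. These scenarios are constructed to serve differentiated applications, both industry-specific (AIGC + industry) and general-purpose (AIGC + general).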

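(6) AIGC application layer

The AIGC application layer targets commercial deployment. It adapts the diverse technical scenarios offered by the function layer to the characteristics and needs of each industry, forming distinctive AIGC application industries that drive the growth of AIGC. AIGC applications generally comprise innovative applications built on generative AI, and traditional applications that integrate AI capabilities into existing products. As AIGC service capabilities mature and open up, application developers in every industry will be able to call them to build applications in many fields, and the application ecosystem will flourish.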

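III. Opportunities and challenges facing AIGC

The preceding analysis shows that large models demand heavy spending on hardware, development, and model training, which raises the barrier to entry. Although China is working hard to catch up in AIGC, a sizeable gap remains with developed countries in investment, application capability, and technical level. In addition, the GPUs and other compute chips that large models need are updated rapidly, and the chip sales bans and supply cutoffs arising from the China-US trade war further hamper AIGC development. Despite these difficulties and the large investment of people and resources, the commercial profit model for large models is still unclear, so opportunity and risk coexist. With this in mind, and drawing on the characteristics of the AIGC technical architecture, the opportunities and challenges facing AIGC are discussed in turn.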

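1. Opportunities brought by AIGC

The opportunities brought by AIGC mainly include the following:

(1) AIGC provides a new engine for the digital economy

The global economy is increasingly digital, and human society is entering a new stage marked chiefly by digitalization. The digital economy has become a major form of the world economy and a core driver of China's economic and social development. As a technology that uses AI models to create digital content, AIGC makes automated data generation possible and can turn tacit knowledge into explicit knowledge. Data assets can therefore grow exponentially, and the way enterprise data assets are generated and composed will change greatly, which in turn promotes the integration of IT and business, improves efficiency, and enables innovation and upgrading. AIGC will become a new engine for innovation in digital content.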

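Gartner predicts that by 2025 generative AI will account for 10% of all data produced, whereas AI-generated data currently accounts for less than 1% of all data, which indicates considerable potential. A recent McKinsey report estimates that AIGC could add USD 4.4 trillion to the global economy each year. According to iiMedia Research (艾媒咨询), the core market of China's AIGC industry is expected to be RMB 7.93 billion in 2023 and to reach RMB 276.74 billion in 2028. On the one hand, the digital economy gives AIGC broad room to grow; on the other, AIGC provides technical support for the digital economy, and the wide application of AIGC has become a new trend in the digital economy.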

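As hardware is upgraded and algorithms are improved, AIGC will become steadily more powerful and AIGC models will grow greatly in size and complexity. The three basic capabilities of content twinning, content editing, and content creation will strengthen markedly, product types will diversify, and the range of applications will widen.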

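(2) AIGC gives rise to a new software service model

Large models require large amounts of compute, electricity, and highly skilled talent, so the barrier is high and ordinary enterprises find it hard to build their own. AIGC has therefore given rise to a new software service model, Model as a Service (MaaS). MaaS takes machine learning and AI models as its foundation and offers them as services through APIs (application programming interfaces): developers use the models' functionality through simple API calls to the cloud, without building and training models themselves. This greatly improves the efficiency of enterprise application development and saves time and resources.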
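What a “simple API call” in the MaaS model looks like can be sketched with the Python standard library. The endpoint URL, the JSON request fields (`model`, `prompt`), and the response field (`text`) below are hypothetical placeholders; each real provider defines its own schema and authentication scheme.

```python
import json
import urllib.request

# Hypothetical endpoint and JSON schema, for illustration only.
ENDPOINT = "https://maas.example.com/v1/generate"


def build_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Package a generation request for a hosted model as an HTTP POST."""
    payload = json.dumps({"model": model, "prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=payload,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def parse_response(body: bytes) -> str:
    """Extract the generated text from the (assumed) JSON response body."""
    return json.loads(body.decode("utf-8"))["text"]
```

Against a real service, the request would be sent with `urllib.request.urlopen(req)` and the returned bytes passed to `parse_response`. The application holds no model weights and runs no training code, which is the point of the MaaS model.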

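At the same time, the AIGC field needs an open, scalable platform to support it. MaaS gives the field a more open and standardized platform through which more applications can access model services, and it brings enterprises services that are more convenient, flexible, and scalable. With MaaS, enterprises can concentrate on application development rather than on building and training models, which lets them launch new applications sooner and offer better products and services. MaaS will become an important interface for the AIGC field, making the development, deployment, and application of AI models more convenient, flexible, and scalable.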

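On September 8, 2023, at the Internet AIGC applications session of the Tencent Global Digital Ecosystem Summit, Tencent Cloud officially released a full-stack AIGC solution that offers enterprises trustworthy, reliable, and usable end-to-end AIGC content services, helping them explore the path to AGI and seize the new opportunities of the AI 2.0 era.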

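(3) AIGC brings new development opportunities to the telecommunications industry

As AI large models and related technologies develop, demand for compute, and for intelligent computing power in particular, will grow sharply, so the planning of intelligent computing networks, the siting of intelligent computing centers, and data processing capability become especially important. Amid an increasingly difficult international situation and a trend toward deglobalization, telecom operators have strong network infrastructure and data centers, abundant compute and data resources, and the backing and funding that come with being state-owned “national team” enterprises. Acting in the national interest, and under national top-level planning and industrial support, they can join forces with research institutes and industry-chain partners to establish and consolidate their role as leaders (“chain leaders”, 链长) of this industry chain. They can also increase investment in the basic theory and application of large AI models, pool technical and financial strengths to tackle and solve key problems, catch up with or reach the world-leading level, and take on a greater mission in the digital economy.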

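In the AIGC industry, operators are well placed to take a deep part in the processing of public data. One model is to use privacy-preserving computation and business-model innovation to supply data products for the training and inference stages of large models. Another is to synthesize data to keep pace with the rapidly growing parameter counts of future large models, and to build a supervision system for large-model training data. Finally, operators can address industries' needs for iterative upgrades in intelligence by offering AI + cross-industry solutions, creating new growth points for enterprises.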

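2. Challenges brought by AIGC

The challenges brought by AIGC mainly include the following:

(1) Significant threats to copyright protection, social norms, and ethics

On copyright, the rapid development and commercialization of AIGC affects not only creators but also the many companies whose revenue depends mainly on copyright. On ethics, some open-source AIGC projects exercise little oversight over the images, text, video, and audio they generate, and the datasets used for training carry latent risks such as infringement. AIGC can be used to generate prohibited images such as fake photos of celebrities, and even violent or indecent videos and images. AI itself does not yet have the capacity for value judgment, and the relevant laws and regulations are still largely absent.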

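In addition, desensitizing data while still using it effectively, so that the value of data resources is fully realized, poses a considerable challenge. In response, on July 13, 2023, the Cyberspace Administration of China and six other departments jointly issued the Interim Measures for the Management of Generative Artificial Intelligence Services (《生成式人工智能服务管理暂行办法》). Following the principles of giving equal weight to development and security and of combining the promotion of innovation with law-based governance, the measures adopt effective steps to encourage innovation in AIGC. They are China's first regulatory policy aimed specifically at the booming AIGC industry and set out rules and requirements for the problems above, although the concrete means and methods still need to be developed and refined.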

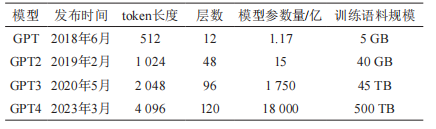

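(2) Major challenges from growth in compute demand and energy consumption

According to OpenAI's research, the compute needed for AI training is growing exponentially at a rate far above the hardware trend described by Moore's law. With model parameter counts and datasets growing rapidly, demand for network bandwidth and compute will grow exponentially. Running a large model involves two parts, training and inference. For training, the estimate in OpenAI's published work is that the compute required depends on the number of model parameters and the size of the training dataset, and is generally about 6 times their product. Taking GPT as an example, the parameter scale of each version is shown in Table 3:

Table 3. Parameter scale of successive GPT versions

According to Table 3, GPT-3 has about 175 billion parameters and a training corpus of 45 TB, which corresponds to about 300 billion training tokens. The theoretical compute required to train GPT-3 is therefore $6 \times 1.75 \times 10^{11} \times 3 \times 10^{11} = 3.15 \times 10^{23}$ floating-point operations (FLOPs) $= 3.15 \times 10^{8}$ PFLOPs, which is equivalent to about 3,646 PFLOPS-days (one PFLOPS-day being a rate of $10^{15}$ operations per second sustained for one day). In practice, GPUs must also handle communication, data access, and other tasks besides model training, so the compute actually required is far greater than this theoretical value.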

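According to published estimates, OpenAI trained GPT-3 on 10,000 NVIDIA V100 GPUs at an effective compute utilization of 21.3%, so the actual compute required was about $1.48 \times 10^{9}$ PFLOPs (17,117 PFLOPS-days). Suppose the training runs on NVIDIA DGX-2 servers, each carrying 16 V100 GPUs, delivering 2 PFLOPS of AI performance, and drawing at most 10 kW. Training would then need 8,559 servers working simultaneously for one day. At a power usage effectiveness (PUE) of 1.2, total electricity consumption would be about 2,464,992 kWh, equivalent to 302.94 tonnes of standard coal and 745.25 tonnes of carbon dioxide emissions. As for training cost, training GPT-4 took about $2.15 \times 10^{25}$ FLOPs on roughly 25,000 A100 GPUs over 90 to 100 days, with GPU utilization estimated at 32% to 36%, at a cost of roughly USD 63 million.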
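The training estimate can be reproduced step by step. The sketch below uses only the figures stated in the text (175 billion parameters, 300 billion tokens, 21.3% effective utilization, 2 PFLOPS and 10 kW per server, PUE 1.2); the function names are illustrative, and the 6 × parameters × tokens rule is the approximation the text relies on, not an exact cost model.

```python
import math

SECONDS_PER_DAY = 86_400
FLOP_PER_PFLOP = 1e15


def training_pflops_days(params: float, tokens: float) -> float:
    """Theoretical training compute, 6 * params * tokens, in PFLOPS-days."""
    total_flop = 6 * params * tokens
    return total_flop / FLOP_PER_PFLOP / SECONDS_PER_DAY


def servers_for_one_day(pflops_days: float, utilization: float,
                        server_pflops: float) -> int:
    """Servers needed to finish in one day at the given effective utilization."""
    return math.ceil(pflops_days / utilization / server_pflops)


def energy_kwh(servers: int, server_kw: float, hours: float, pue: float) -> float:
    """Facility energy: IT power times duration, scaled by PUE."""
    return servers * server_kw * hours * pue


ideal = training_pflops_days(params=1.75e11, tokens=3e11)
servers = servers_for_one_day(ideal, utilization=0.213, server_pflops=2.0)
total_kwh = energy_kwh(servers, server_kw=10.0, hours=24.0, pue=1.2)
print(round(ideal), servers, round(total_kwh))
```

With these inputs the script gives about 3,646 PFLOPS-days of theoretical compute, 8,559 servers, and 2,464,992 kWh, matching the figures in the text. Changing `utilization` or `pue` shows how strongly the energy bill depends on cluster efficiency.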

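For inference, following the calculation method given by OpenAI, the compute required is about 2 times the product of the number of model parameters and the number of tokens processed. According to Table 3, each round of dialogue produces at most 2,048 tokens, so the inference compute per round is $2 \times 1.75 \times 10^{11} \times 2048 = 7.168 \times 10^{14}$ FLOPs $= 0.7168$ PFLOPs. With 25 million visits to ChatGPT per day, one round of dialogue per visit requires $0.7168 \times 2.5 \times 10^{7} = 1.792 \times 10^{7}$ PFLOPs per day; under the assumption of 10 rounds of dialogue per visit, the figure is ten times larger, $1.792 \times 10^{8}$ PFLOPs.

Similarly, assuming an effective compute utilization of 30% for inference, the $1.792 \times 10^{7}$ PFLOPs figure becomes a daily demand of $5.973 \times 10^{7}$ PFLOPs. By this method, ChatGPT's daily dialogue is estimated to need 338 servers working simultaneously, consuming about 52,728 kWh of electricity per day. Against the background of China's “dual carbon” goals, how to overcome the combined pressure of technology and cost to meet the high compute demand of AIGC, and how to cope with the compute surges that arise when building and using AIGC, are questions that need careful thought and solutions.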
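The inference estimate can be parameterized in the same way. This is a minimal sketch assuming the 2 × parameters × tokens rule used in the text; the function names are illustrative. The number of dialogue rounds per visit is kept as an explicit argument because the daily total scales linearly with it.

```python
FLOP_PER_PFLOP = 1e15


def inference_pflop_per_round(params: float, tokens: int) -> float:
    """Theoretical compute for one dialogue round, 2 * params * tokens, in PFLOP."""
    return 2 * params * tokens / FLOP_PER_PFLOP


def daily_inference_pflop(per_round: float, visits: float, rounds_per_visit: int,
                          utilization: float = 1.0) -> float:
    """Daily compute demand; dividing by utilization gives the provisioned amount."""
    return per_round * visits * rounds_per_visit / utilization


per_round = inference_pflop_per_round(params=1.75e11, tokens=2048)
one_round = daily_inference_pflop(per_round, visits=2.5e7, rounds_per_visit=1)
provisioned = daily_inference_pflop(per_round, visits=2.5e7, rounds_per_visit=1,
                                    utilization=0.30)
```

Setting `rounds_per_visit=10` multiplies both daily figures by ten, which shows how sensitive the estimate is to assumptions about user behavior.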

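(3) Building robust intelligent computing networks and scheduling platforms is a major challenge

Because compute resources are heterogeneous, the effective compute utilization of an intelligent computing cluster depends on how efficiently the network connects its nodes and how efficiently the scheduling platform allocates them. An intelligent computing platform requires nodes to exchange massive amounts of data at very low latency (on the order of microseconds) and high bandwidth (100 GB and above) so that many nodes can work in step on a shared task. Compute providers such as intelligent computing centers also differ in location, compute cost, and the types of resources they can offer, so assigning suitable compute to each requester is critical and poses a considerable challenge for unified compute scheduling.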
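The allocation problem described above, matching a request to a provider by resource type, location, and cost, can be sketched as a simple greedy selection. The `Provider` and `Job` fields, the use of latency as a stand-in for location, and the cheapest-feasible policy are all assumptions for illustration; a production scheduler would also handle queuing, preemption, and network topology.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    name: str
    chip: str                    # e.g. "GPU" or "NPU"
    free_pflops: float           # currently unallocated compute
    latency_ms: float            # network latency from the requester
    cost_per_pflops_hour: float


@dataclass
class Job:
    chip: str
    pflops: float
    max_latency_ms: float


def schedule(job: Job, providers: list[Provider]) -> Optional[Provider]:
    """Pick the cheapest provider that meets the job's chip, capacity and latency needs."""
    feasible = [
        p for p in providers
        if p.chip == job.chip
        and p.free_pflops >= job.pflops
        and p.latency_ms <= job.max_latency_ms
    ]
    if not feasible:
        return None  # no provider can host this job right now
    chosen = min(feasible, key=lambda p: p.cost_per_pflops_hour)
    chosen.free_pflops -= job.pflops  # reserve the capacity
    return chosen
```

For example, a job needing 40 PFLOPS of GPU compute within 10 ms would skip a cheaper but more distant GPU site and an NPU-only site, and land on the nearest GPU provider with enough free capacity.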

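Operators have put forward the concept of the computing power network (算力网络) and achieved a series of technical breakthroughs, but compute scheduling, compute-aware routing, and computing-network integration still need deeper research in order to fit the AIGC technical architecture better and to raise effective compute utilization and data-flow efficiency. Building intelligent computing networks and scheduling platforms for the domestic market will be one of the topics requiring in-depth study.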

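IV. Conclusion

The arrival of ChatGPT has accelerated the development of AIGC technology and applications. As an important marker of the transition from the AI 1.0 era to the AI 2.0 era, AIGC has opened the door to cognitive intelligence. It has very broad application prospects and market potential and can bring more convenience and innovation to society, but its many challenges and risks must also be recognized. As deep learning, NLP, and other technologies continue to advance and converge, AIGC can be expected to play an increasingly important role in the future digital economy and to become an important part of applied AI.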

翻译:

This paper discusses the application progress of generative artificial intelligence (AIGC)

Artificial Intelligence Generated Content (AIGC) refers to a technology that generates relevant content with appropriate generalization ability through the learning and recognition of existing data based on artificial intelligence techniques such as generating adversarial networks and large pre-trained models. The core idea of AIGC technology is to use artificial intelligence algorithms to generate content with certain creativity and quality. By training the model and learning from large amounts of data, AIGC can generate content related to the input conditions or instructions.

The development of AIGC can be traced back to 1950 when Alan Turing proposed the famous “Turing test” in his paper Computing Machinery and Intelligence. Gives a test method to determine whether a machine is “intelligent,” that is, whether a machine can “generate” content by imitating the way humans think, In 1957, Lejaren Hiller and Leonard saacson completed the first computer-composed musical composition in human history, which can be seen as the beginning of AIGC. After more than half a century of rapid development, with the accumulation of valuable and effective data, the substantial improvement of computing power, and the proposal and application of deep learning algorithms, today’s artificial intelligence technology has been popularized in many industries, and plays an important role in the corresponding scenarios. For example, Natural Language Processing (NLP), computer vision, recommendation system, predictive analysis, etc., subvert the production and life mode in the corresponding scene, and play an important role in bringing great convenience to human society.

From the perspective of AIGC engineering application, this paper discusses the development status and trend of AIGC at home and abroad. On this basis, the corresponding current AIGC application products at home and abroad are classified, and AIGC technical architecture is introduced in detail. Finally, the opportunities and challenges faced by AIGC are given. 数字化转型网(www.szhzxw.cn)

Development status of AIGC represented by ChatGPT

As the ChatGPT craze continues to heat up, according to 51GPT, there are currently 2,722 AIGC applications around the world. The continuous emergence of these tools has further accelerated the commercial landing of AIGC, making the industrial ecological chain gradually improve. As shown in Figure 1, at present, the main modes of AIGC products include audio generation, video generation, text generation, image generation, and cross-modal generation among images, video and text. The following are from foreign and domestic two aspects of AIGC application development status and trend.

Figure 1 AIGC applied modal classification

1. AIGC application development status abroad

Based on relevant literature and research reports, major foreign AIGC head application products are classified according to text, image, audio and video, and the corresponding representative products are shown in Table 1.

Table 1 Typical AIGC products and their characteristics abroad

As can be seen from Table 1, foreign AIGC application products are mainly in the United States, and most of the products have multi-modal capabilities, which is also an important direction for the evolution and landing of current AIGC products. From a technical point of view, the accumulation and integration of AI technologies such as generation algorithms, pre-training models, and multi-modes have given birth to the explosion of AIGC. Generation algorithms mainly include Transformer, Flow-based models, Diffusion Model and other deep learning algorithms. 数字化转型网(www.szhzxw.cn)

According to the basic types, pre-training models mainly include: (1) NLP pre-training models, such as Google’s LaMDA and PaLM, Open AI’s GPT series; (2) Computer Vision (CV) pre-trained models such as Microsoft’s Florence; (3) Multi-modal pre-training model, that is, the integration of text, pictures, audio and video and other forms of content. The pre-trained model is more versatile, becoming a versatile and versatile Al model, mainly thanks to the use of Multimodal technology (multimodal), that is, multi-modal representation of images, sound, language and other integration of machine learning. Multimodal technology promotes the diversity of AIGC content and makes AIGC more versatile.

From the perspective of market applications, OpenAI’s ChatGPT4 is undoubtedly in an absolute leading position. From the information revealed at the OpenAI conference, ChatGPT has been officially released since then, with more than 100 million weekly active users, and more than 2 million developers and customers are currently developing on the company’s API. With 92% of enterprises using its products, it will quickly create customized ChatGPT GPTs, enable everyone to have a large model, introduce a more powerful GPT-4 Turbo model, direct support for 128k context, update the knowledge base to April this year, and the upcoming launch of the GPT Store app Store. And new Assistants apis for AI agents.

2. Domestic AIGC application development status

In domestic terms, with the release of Baidu’s “Wenxin Word”, Ali Yi thousand questions, News Fly Spark big model, wisdom spectrum AI ChatGLM, etc., Meituan, Baichuan intelligence, Cloud sound, Meitu, Tencent, etc., have joined the big model track, and an “arms race” around the big model has become increasingly heated. 数字化转型网(www.szhzxw.cn)

At the end of May, the new Generation of artificial intelligence Development Research Center of the Ministry of Science and Technology released the “China Artificial Intelligence large model Map Research Report”, and 79 large models above the scale of 1 billion parameters have been released in China, almost entering the “100 model war”. As a communication operator, facing the wide application prospect of AIGC, in order to avoid being pipelined, it is urgent to do the layout and card position in advance. Based on this, the three major domestic operators have released AI grand models: on June 28, China Unicom released the “Honghu Grand Model 1.0” graphic generation model during the 2023 Shanghai Mobile World Congress, which can realize the functions of Vincennes, video clips, and pictures. On July 6, at the sub-forum of “Computing and Network Integration · SunAC Future” of the World Artificial Intelligence Conference, China Telecom officially released the large language model TeleChat, and demonstrated the ability of the large model to empower the data center, intelligent customer service and smart government; On July 8, at the innovation forum of “Large Model and Deep Industry Intelligence” at the 2023 World Artificial Intelligence Conference, China Mobile officially released the Jiutian · Haiyuan government Affairs large model and the Jiutian · Customer service large model. According to the latest AIGC application release, the corresponding main technical characteristics of typical domestic AIGC products are shown in Table 2.

Table 2 Typical domestic AIGC products and their characteristics

From the perspective of the application of domestic AIGC products, the corresponding products are in an accelerated state of iteration, similar to foreign AIGC products, and gradually penetrate into news media, smart city, biotechnology, smart office, film and television production, smart education, smart finance, smart medical care, smart factory, life services and other industries, and gradually have multi-modal capabilities. However, due to the influence of factors such as the blocking of computing power chips and the non-disclosure of algorithms, there is still a big gap in the capacity output of domestic AIGC products compared with similar foreign products. According to the Qubit think tank forecast, 2023-2025 China’s AIGC industry is in the cultivation and exploration period, the annual compound growth rate is expected to be 25%, 2026-2030 the industry will usher in a rapid growth stage, China’s market size is expected to reach 1,149.1 billion yuan in 2030. 数字化转型网(www.szhzxw.cn)

Technical architecture of AIGC

On the basis of the existing generative artificial intelligence AIGC technical architecture, but the architecture is relatively extensive and fails to fully cover the core elements of AIGC technology, and does not further refine and stratification in combination with AIGC technical characteristics and applications. Based on this, the AIGC technical architecture is proposed on this basis, in order to better understand the technical characteristics of AIGC. As shown in Figure 2, AIGC mainly consists of six layers from the perspective of technical architecture: data layer, computing infrastructure layer, algorithm and large model layer, AIGC capability layer, AIGC function layer and AIGC application layer.

Figure 2 AIGC technical architecture

(1) Data layer

Data is one of the three core elements of AIGC, and the quality, quantity and diversity of data in the data layer determine the training effect of large models. The construction of data set is mainly generated by data acquisition, data cleaning, data annotation, model training, model testing and product evaluation. According to the two types of large language model and multi-modal model involved in the current AIGC application, the data sets can be divided into large language model data set and multi-modal data set. The large language model data set mainly comes from Wikipedia, web encyclopedias, books, journals, web data, search data, B-side industry data and some professional databases. Multimodal data sets are mainly data sets based on the combination of two or more modal forms (text, image, video, audio, etc.).

(2) Computing infrastructure layer

The computing infrastructure layer mainly provides the computing base for the large model layer, and the training and reasoning computing power required by the large model are provided by the computing infrastructure layer. Computing power chips mainly include Graphics processor (GPU), Graphics Processing Unit (GPU), Tensor Processing Unit (TPU), neural network processor (NPU), Neural Processing Unit and various kinds of intelligent computing acceleration chips. Due to the huge computing power required for large model training and high delay requirements, computing chips are generally located in the same data center, and high-performance AI clustering is adopted to ensure computing power requirements. At the same time, in order to reduce the delay and bandwidth requirements between servers, A dedicated InfiniBand network or Remote Direct Memory Access over Converged Ethernet (RoCE) switching network is required to form a high-performance intelligent computing cluster. Dedicated InfiniBand network architecture is relatively closed, complex, and expensive. Foreign countries mainly use this technology, while domestic countries mainly use RoCE to build unified and integrated network architecture, such as Huawei’s hyper-converged data center network solution, which uses the original iLossLess intelligent lossless algorithm. Through the cooperation of the three key technologies of flow control technology, congestion control technology and intelligent lossless storage network technology, we can build lossless Ethernet network and help realize the goal of “zero packet loss, low delay and high throughput” of data center network in AI scenario. 
Further, considering the differences in the application of various types of computing power chips in different industries, it is urgent to build a heterogeneous computing power pool, and improve the effective utilization of computing power through efficient scheduling algorithms and load balancing algorithms, so as to achieve native computing power.

(3) Algorithm and large model layer

At present, the main algorithms used in large models include deep learning algorithms, reinforcement learning algorithms and natural language processing algorithms. Deep learning algorithms mainly include convolutional neural network (CNN) and recurrent neural network (RNN). Convolutional neural network (CNN) is a deep learning algorithm commonly used in image recognition and computer vision tasks, and recurrent neural network (RNN) is a deep learning algorithm commonly used in sequence data processing. Reinforcement learning algorithm is an important algorithm for decision-making and control in large models. Deep reinforcement learning algorithm combines the advantages of deep learning and reinforcement learning to further expand the application range of reinforcement learning. Common examples include Deep Q-Networks (DQN), Deep Deterministic Policy Gradient (DDPG), Proximal Policy Optimization (PPO), and Trust Region Policy Optimization (TRPO), etc. Natural language processing algorithms mainly include text classification algorithm, word segmentation algorithm, named entity recognition algorithm, sentiment analysis algorithm, machine translation algorithm, question answering system algorithm, speech recognition algorithm and so on.

The large model layer is mainly based on the computing power provided by the computing power infrastructure to build large models for general purpose and industries, etc. General large models are generally built by technology companies with strong technical strength, while providers dedicated to building large models for professional or industrial fields are mostly based on open source algorithm framework, combining less general data with high-quality professional data. To train large models of specialized areas at a lower cost, such as the corresponding models in Section 1.

In general, large models mainly include three types: general (or original) language model, instruction adjustment model and conversation adjustment model. General (or original) language model is usually trained with a large number of unlabeled texts to learn the statistical rules of language, but there is no specific task orientation. After training, the model predicts the next word according to the language in the training data. Instruction adjustment model is based on the general language model, through the training of specific task data, so that it can make appropriate response to given instructions, such as understanding and executing specific language tasks, such as question and answer, text classification, summary, writing, keyword extraction, etc., the model is trained to predict the response to the instructions given in the input; Dialogue adjustment model is based on the general language model, through the training of dialogue data, it can make dialogue. This type of model can understand and generate conversations, such as generating chatbot responses, and the model is trained to conduct conversations by predicting the next response. 数字化转型网(www.szhzxw.cn)

(4) AIGC capability layer

AIGC ability layer is mainly manifested in four aspects: twinning ability, generalization ability, insight ability and emergence ability. Digital twin refers to the use of models and data simulation to restore the dynamic characteristics of the whole life cycle of things in the physical world, and can be evaluated in both ways, it is a high-level expression of digitalization, digital twin is the core competence of AIGC, promote the whole industry to reduce costs and increase efficiency, and can effectively combine with the current digital people to create favorable conditions for the meta-universe. Generalization ability means that AIGC large model focuses on the integration and transformation of knowledge, and shows its universality and versatility in different content generation tasks; emergence ability means that when the model breaks through a certain scale in the field of large model, its performance is significantly improved, showing amazing and unexpected ability; and insight ability refers to the ability that suddenly bursts out after multiple learning and training. In general, it refers to the emergence ability achieved on the basis of reducing the original parameter size and training set. The interaction of generalization ability, insight ability and emergence ability can effectively improve the ability output of AIGC, reduce the requirements on the number of parameters and training data sets by multiple means such as algorithm tuning, and improve the universality and versatility of large models in different content generation tasks.

(5) AIGC functional layer

AIGC functional layer is mainly based on different large model capabilities, presenting a variety of technical scenarios, such as Wensheng, Wensheng diagram, Tu-sheng diagram, graphic collaboration, video generation, chatbot, audio generation, code generation and other multi-modal modes, through the construction of these technical scenarios to meet the AIGC+ industry, AIGC+ general and other differentiated applications. 数字化转型网(www.szhzxw.cn)

(6) AIGC application layer

The AIGC application layer is mainly oriented towards commercial landing. According to the characteristics and needs of all walks of life, the AIGC application layer is adapted with the help of the diversity of technical scenarios provided by the AIGC functional layer to form a characteristic AIGC application industry and drive the development and prosperity of AIGC. In general, AIGC applications include innovative applications based on generative AI technologies and traditional applications that leverage AI capabilities in an integrated manner on top of existing ones. With the gradual improvement and opening of various AIGC service capabilities, application developers in all walks of life can call AIGC service capabilities to develop applications in various fields, and the application ecology will show a situation of blooming.

Opportunities and challenges facing AIGC

From the above analysis, it can be seen that large models require high hardware costs, development costs and model training costs, which undoubtedly raises its threshold. Up to now, although China is catching up in AIGC, there is still a big gap between developed countries in terms of investment, application ability and technical level in this area. In addition, the GPU and other computing power chips required for large models are updated faster, and the chip ban and supply cut caused by the Sino-US trade war also bring more disadvantages to the development of AIGC. In the face of these difficulties and a large amount of manpower and material resources investment, however, the corresponding business profit model of the large model has not been clear, and opportunities and risks coexist. Based on this, combined with the characteristics of the technical architecture of AIGC, the opportunities and challenges faced by AIGC are discussed respectively. 数字化转型网(www.szhzxw.cn)

1. Opportunities brought by AIGC

The opportunities presented by AIGC include the following:

(1) AIGC injects new engines into the digital economy

At present, the global economy is showing more and more digital characteristics, and human society is entering a new stage with digital as the main symbol. Digital economy has become a major economic form in the world and a core driving force for China’s economic and social development. AIGC, as a technology that uses artificial intelligence models to create digital content, makes it possible to automatically generate data, which can transform tacit knowledge into explicit knowledge, which also means that data assets can achieve exponential growth and greatly change the generation method and composition of enterprise data assets. In order to promote the integration of IT and business, improve work efficiency, and achieve innovation and upgrading, AIGC will become a new engine for the innovation and development of digital content. 数字化转型网(www.szhzxw.cn)

Gartner expects generative AI to account for 10% of all data generated by 2025, up from less than 1% of all data generated by AI today, with greater potential. According to a recent McKinsey report, AIGC could add $4.4 trillion to the global economy annually. In addition, according to IIMedia Consulting data show that the core market size of China’s AIGC industry is expected to be 7.93 billion yuan in 2023, and will reach 276.74 billion yuan in 2028. Therefore, on the one hand, the digital economy provides a broad development space for AIGC, on the other hand, AIGC provides technical support for the digital economy, and the wide application of AIGC has become a new trend of the digital economy.

With the upgrading of hardware and algorithm improvement and optimization, the performance of AIGC will become more and more powerful, the size/complexity of AIGC model will be greatly improved, the corresponding content twin, content editing, content creation three basic capabilities will be significantly enhanced, product types will gradually enrich, and application fields will be more extensive.

(2) AIGC creates a new software service model

Because large models require large computing power, electricity and energy consumption, and high-precision technical talent investment, the threshold is high, and it is difficult for general enterprises to build their own large models. Therefore, AIGC has given rise to a new software Service Model – Model as a Service (MaaS), which is based on machine learning and artificial intelligence models. These models are served through apis (application programming interfaces), which means that in the cloud, developers can use the functionality of these models with simple API calls, rather than having to build and train the models themselves. In this way, enterprises can greatly improve the efficiency of application development, and greatly save time and resources.

At the same time, the development of AIGC field also needs an open and scalable platform support. The MaaS pattern provides a more open and standardized platform for the AIGC domain, allowing more applications to access and use these model services. The emergence of this model has brought more convenient, flexible and scalable services to enterprises in the industry. By leveraging the MaaS pattern, companies can focus more on application development without much focus on building and training models. This will enable businesses to launch new applications faster and offer better products and services. The MaaS pattern will become an important interface in the future AIGC field, and the development, deployment and application of AI models will become more convenient, flexible and scalable. 数字化转型网(www.szhzxw.cn)

On September 8, 2023, at the Internet AIGC application session of the Tencent Global Digital Ecosystem Summit, Tencent Cloud officially released its full-stack AIGC solution, which offers enterprises trustworthy, reliable, and usable full-link AIGC content services, helps them explore the road to AGI, and helps them seize new opportunities in the AI 2.0 era.

(3) AIGC brings new development opportunities to the communications industry

As large AI models and related technologies develop, demand for computing power, and for intelligent computing power in particular, will surge, making intelligent network planning, the layout of intelligent computing centers, and data processing capabilities especially important. Against an increasingly severe international situation and a trend toward deglobalization, communication operators have robust infrastructure networks and data centers, are rich in both computing power and data resources, and have the institutional pedigree and financial strength of the “national team”, which lets them act from the perspective of national interests. With top-level planning and industrial support from the state, operators can join forces with research institutes and the industrial chain to gradually establish themselves as leaders of the industrial chain, strengthen investment in the basic theory and application of large AI models, concentrate technological and capital advantages on key problems to achieve breakthroughs, keep pace with or reach the world’s leading level, and shoulder a greater mission in the digital economy.

In the AIGC industry, operators are expected to participate deeply in public data processing. One approach is to provide data products for large-model training and inference, supported by privacy computing technology and business model innovation. Another is to synthesize data to feed the rapidly growing parameter counts of future large models, and to build a supervision system for large-model training data. Finally, operators can focus on the iterative upgrading needs of industry intelligence and provide AI+ solutions across industries, creating new growth points for enterprises.

2. Challenges posed by AIGC

The challenges posed by AIGC include the following:

(1) AIGC poses a great threat to copyright protection, social norms, and ethics

In terms of copyright protection, the rapid development and commercial application of AIGC affects not only creators but also the many enterprises that rely on copyright as their main source of revenue. In terms of ethics, some open-source AIGC projects exercise little oversight over the images, text, video, and audio they generate, and the data sets used for AI training carry potential risks such as infringement. AIGC can be used to generate illegal images such as fake celebrity photos, and even violent or indecent videos and pictures. Relevant laws and regulations are still largely absent.

In addition, desensitizing data, using it effectively, and realizing the full value of data resources will become harder. In response to these problems, on July 13, 2023, seven departments including the Cyberspace Administration of China jointly issued the Interim Measures for the Management of Generative Artificial Intelligence Services. The measures adhere to the principles of giving equal weight to development and security and of combining the promotion of innovation with law-based governance, and they adopt effective measures to encourage the innovative development of AIGC. This is the first time the state has issued a normative policy for the fast-growing AIGC industry. It sets out norms and requirements for the issues above, but the specific means and methods still need further exploration and refinement.

(2) Growth in computing resource demand and energy consumption brings great challenges

According to OpenAI's research, the computing power required for AI training is growing exponentially at a rate far exceeding Moore’s Law for hardware, and as the parameter counts and data sets of large models grow rapidly, demand for network bandwidth and computing resources will also increase exponentially. A large model's computing demand has two parts: training and inference. For training, according to the calculation in the paper published by OpenAI, the computing power required is generally determined by the number of model parameters and the size of the training data set, and is about 6 times the product of the two. Taking GPT as an example, the parameter scale of each version is shown in Table 3:

Table 3 Parameter scale of each version of GPT product evolution

According to Table 3, GPT-3 has about 175 billion parameters and a training corpus of 45 TB, which converts to about 300 billion tokens. The computing power required for the GPT-3 training stage is therefore $6 \times 1.75 \times 10^{11} \times 3 \times 10^{11} = 3.15 \times 10^{23}$ FLOPs (floating-point operations) $= 3.15 \times 10^{8}$ PFLOPs, equivalent to about 3,646 PFLOPS-days. In practice, GPUs must also handle communication, data access, and other tasks in addition to the training computation itself, so the actual computing power required is much higher than this theoretical value.

According to reported figures, OpenAI trained GPT-3 on 10,000 NVIDIA V100 GPUs with an effective computing power ratio of 21.3%, so the actual requirement for GPT-3 training would be about $1.48 \times 10^{9}$ PFLOPs (17,117 PFLOPS-days). Assuming training runs on NVIDIA DGX-2 servers, each with 16 V100 GPUs, 2 PFLOPS of AI computing performance, and a maximum power draw of 10 kW, 8,559 servers would need to work simultaneously for one day. At a Power Usage Effectiveness (PUE) of 1.2, total power consumption is about 2,464,992 kWh, equivalent to 302.94 tons of standard coal and 745.25 tons of carbon dioxide emissions. As for training cost, OpenAI is estimated to have trained GPT-4 with approximately $2.15 \times 10^{25}$ FLOPs over 90 to 100 days on about 25,000 A100 GPUs, with GPU utilization between 32% and 36% and a training cost of approximately 63 million US dollars.
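The training arithmetic above can be checked with a short script. This is a sketch that uses only the figures quoted in the text (175 billion parameters, 300 billion tokens, 21.3% effective ratio, 2 PFLOPS and 10 kW per server, PUE 1.2); the function and variable names are illustrative.

```python
import math

SECONDS_PER_DAY = 86_400
PETA = 1e15


def training_flops(params: float, tokens: float) -> float:
    """Rule of thumb from the text: about 6 operations per parameter per token."""
    return 6 * params * tokens


# GPT-3 inputs quoted in the text.
total_flops = training_flops(1.75e11, 3e11)               # about 3.15e23 operations
ideal_pflops_days = total_flops / PETA / SECONDS_PER_DAY   # about 3,646
actual_pflops_days = ideal_pflops_days / 0.213             # about 17,117 at 21.3% efficiency

# Server assumptions quoted in the text: 2 PFLOPS and 10 kW per DGX-2, PUE 1.2.
servers = math.ceil(actual_pflops_days / 2)                # servers needed for a one-day run
energy_kwh = servers * 10 * 24 * 1.2                       # kW x hours x PUE

print(f"{total_flops:.3e} FLOPs, {ideal_pflops_days:.0f} PFLOPS-days at 100% efficiency")
print(f"{actual_pflops_days:.0f} PFLOPS-days, {servers} servers, {energy_kwh:,.0f} kWh")
```

Changing the effective ratio or the per-server figures shows how sensitive the server count and energy total are to those assumptions.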

For inference, according to the calculation method provided by OpenAI, the computing power required is about 2 times the product of the number of model parameters and the number of tokens processed. According to Table 3, each round of dialogue generates at most 2,048 tokens, so the inference demand of one round is $2 \times 1.75 \times 10^{11} \times 2048 = 7.168 \times 10^{14}$ FLOPs $= 0.7168$ PFLOPs. Based on about 25 million ChatGPT visits per day and assuming 10 dialogues per visitor, the daily inference computing power demand is $0.7168 \times 2.5 \times 10^{7} \times 10 = 1.792 \times 10^{7}$ PFLOPs.

Similarly, assuming an effective computing power ratio of 30% for inference, the daily inference demand becomes $5.973 \times 10^{7}$ PFLOPs. On this basis, ChatGPT's daily conversations require 338 servers working simultaneously, with daily power consumption of about 52,728 kWh. This analysis shows that, against the current “dual-carbon” background, deep thought and practical solutions are needed on how to break through the dual pressures of technology and cost to meet the high computing power demand of AIGC, and on how to handle the computing power peaks that come with building and applying AIGC.
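The inference estimate can be reproduced the same way. The sketch below uses only the inputs stated in the text (175 billion parameters, 2,048 tokens per dialogue, 25 million visits per day, 10 dialogues per visit, 30% effective ratio); the names are illustrative. One caveat: multiplying these stated inputs directly gives about $1.792 \times 10^{8}$ PFLOPs per day, ten times the daily figure quoted above, so the server and power totals in the text should be read against the article's own figure rather than against this script's output.

```python
PETA = 1e15


def inference_flops(params: float, tokens: int) -> float:
    """Rule of thumb from the text: about 2 operations per parameter per token."""
    return 2 * params * tokens


per_dialogue_pflop = inference_flops(1.75e11, 2048) / PETA   # 0.7168 PFLOPs per dialogue
daily_pflop = per_dialogue_pflop * 2.5e7 * 10                 # visits x dialogues per visit
daily_pflop_at_30pct = daily_pflop / 0.30                     # scaled by the 30% effective ratio

print(f"per dialogue: {per_dialogue_pflop:.4f} PFLOPs")
print(f"per day: {daily_pflop:.3e} PFLOPs, {daily_pflop_at_30pct:.3e} PFLOPs at 30%")
```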

(3) Building a robust intelligent computing network and scheduling platform faces great challenges

Because computing resources are heterogeneous, the effective computing power ratio of an intelligent computing cluster depends on how efficiently its nodes are connected by the intelligent computing network and how well the scheduling platform is configured. An intelligent computing platform requires nodes to exchange massive amounts of data with extremely low delay (microsecond level) and high bandwidth (more than 100 GB), so that multiple nodes can complete their tasks in step with one another. At the same time, computing power providers such as intelligent computing centers differ in location, cost, and the types of resources they can offer, so allocating suitable computing resources to the demand side is critical and poses a great challenge for the unified scheduling of computing power.
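As a toy illustration of the allocation problem described above, the sketch below picks the cheapest provider that satisfies a request's resource type, capacity, and latency limit. It is a deliberately simple greedy rule, not a description of any operator's scheduling platform, and all provider names, costs, latencies, and capacities are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    name: str
    resource_type: str           # e.g. "gpu" or "cpu"
    latency_ms: float            # network latency to the demand side
    cost_per_pflops_hour: float
    free_pflops: float           # capacity still unallocated


@dataclass
class Demand:
    resource_type: str
    pflops: float
    max_latency_ms: float


def pick_provider(demand: Demand, providers: list[Provider]) -> Optional[Provider]:
    """Return the cheapest provider that satisfies type, capacity, and latency."""
    feasible = [
        p for p in providers
        if p.resource_type == demand.resource_type
        and p.free_pflops >= demand.pflops
        and p.latency_ms <= demand.max_latency_ms
    ]
    if not feasible:
        return None
    best = min(feasible, key=lambda p: p.cost_per_pflops_hour)
    best.free_pflops -= demand.pflops   # reserve the capacity
    return best


# Hypothetical providers, invented for illustration only.
providers = [
    Provider("center-east", "gpu", latency_ms=5, cost_per_pflops_hour=12.0, free_pflops=40),
    Provider("center-west", "gpu", latency_ms=30, cost_per_pflops_hour=7.0, free_pflops=100),
    Provider("center-edge", "cpu", latency_ms=2, cost_per_pflops_hour=3.0, free_pflops=10),
]
chosen = pick_provider(Demand("gpu", pflops=20, max_latency_ms=10), providers)
print(chosen.name if chosen else "no feasible provider")
```

Even in this small case the cheapest GPU provider is ruled out by latency, which hints at why unified scheduling across locations, costs, and resource types is hard at scale.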

Operators have already proposed the concept of the computing power network and made a series of breakthroughs in related technologies, but computing power scheduling, computing power routing, and computing-network integration need further study in order to better fit the AIGC technical architecture and improve its effective computing power ratio and data flow efficiency. Building a domestic intelligent computing network and scheduling platform will be one of the issues requiring further research.

Concluding remarks

The emergence of ChatGPT has accelerated the development of AIGC technology and applications. As an important marker of artificial intelligence moving from the 1.0 era into the 2.0 era, AIGC has opened the door to cognitive intelligence for human society. It has broad application prospects and market potential, and can bring more convenience and innovation to society. At the same time, AIGC clearly faces many challenges and risks of various kinds. With continued progress and integrated innovation in deep learning, NLP, and related technologies, AIGC can be expected to play an increasingly important role in the future digital economy and to become an important part of applied artificial intelligence.

This article was reprinted by 数字化转型网 (www.szhzxw.cn); source: Southern Metropolis Daily. Editing and translation: 宁檬树, 数字化转型网.
