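The EU's Artificial Intelligence Act Is Officially Published, Opening a New Chapter in AI Regulation

Digital Transformation Network: Artificial Intelligence Topic

Learn alongside leading AI practitioners from around the world. Digital Transformation Network (数字化转型网) runs a research and study community devoted to AI technology, industry, and scholarship. Scan the QR code to join.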

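On 12 July 2024, the Artificial Intelligence Act (AI Act) was published in the Official Journal of the European Union. This landmark legislation comprises 180 recitals, 113 articles, and 13 annexes, and it establishes a comprehensive framework for the development, deployment, and use of AI systems in the EU. The Act enters into force 20 days after publication, on 1 August 2024 local time (2 August Beijing time), and it combines phased application with a risk-based approach.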

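1. Phased implementation

● By 2 November 2024

Each Member State identifies the public authorities that protect fundamental rights and publishes the list (Article 77(2)). The national AI competent authorities themselves must be designated by 2 August 2025.

● From 2 February 2025

The general provisions and the prohibitions apply. The list of banned AI practices that pose "unacceptable risk", such as social scoring systems, takes effect six months after the Act enters into force.

● By 2 May 2025

The EU AI Office is to have the codes of practice for general-purpose AI models ready.

● From 2 August 2025

The rules on general-purpose AI (GPAI) models apply, and providers must meet obligations such as transparency.

● By 2 February 2026

The European Commission must adopt a template for the post-market monitoring plan, together with the list of elements the plan must contain.

● From 2 August 2026

The Act becomes generally applicable: all remaining provisions apply, including the full set of obligations for the high-risk AI systems listed in Annex III. The governance and conformity assessment infrastructure should be operational, and each Member State must have at least one national AI regulatory sandbox in operation by 2 August 2026.

● From 2 August 2027

The remaining high-risk obligations apply, for example those for high-risk AI systems used in medical devices, machinery, and radio equipment. Providers of GPAI models placed on the market before 2 August 2025 must take the necessary steps to comply with the Regulation by 2 August 2027.

● By November 2028

The European Commission is to draw up a report on the delegation of power, that is, on its power to adopt delegated acts under the Act.

● By 31 December 2030

AI systems that are components of large-scale IT systems and were placed on the market or put into service before 2 August 2027 must be brought into compliance with the Act.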
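The date arithmetic behind this timeline can be checked with a short Python sketch. It is only an illustration: the `MILESTONES` labels are paraphrases of the entries above, and the helper name `milestones_reached` is hypothetical, not anything defined by the Act.

```python
from datetime import date, timedelta

# Publication date in the Official Journal, as stated in the article.
PUBLISHED = date(2024, 7, 12)
# The Act enters into force 20 days after publication.
ENTRY_INTO_FORCE = PUBLISHED + timedelta(days=20)

# "Applies from" dates copied from the timeline; labels are paraphrased.
MILESTONES = [
    (date(2025, 2, 2), "general provisions and prohibitions"),
    (date(2025, 8, 2), "general-purpose AI model obligations"),
    (date(2026, 8, 2), "general application, incl. Annex III high-risk systems"),
    (date(2027, 8, 2), "remaining high-risk obligations"),
]


def milestones_reached(on: date) -> list[str]:
    """Return the labels of every milestone whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


print(ENTRY_INTO_FORCE)  # 2024-08-01
print(milestones_reached(date(2025, 9, 1)))
```

Calling `milestones_reached(date(2025, 9, 1))` returns the first two labels, since only the February 2025 and August 2025 dates have passed by then. Deadlines phrased as "by" a date (for example 31 December 2030) are deliberately left out, because they are compliance deadlines rather than start dates.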

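2. Scope of the Act

The EU AI Act establishes a comprehensive regulatory framework that reaches both public and private entities operating in the EU. Its scope is notably broad, reflecting how pervasive AI technology has become and its potential impact on EU citizens. The main entities covered are:

Providers of AI systems:

Entities that develop AI systems intended to be placed on the EU market or deployed in the EU fall within the Act. Article 3(3) defines a provider, in essence, as a natural or legal person, public authority, agency, or other body that develops an AI system, or has one developed, in order to place it on the market or put it into service under its own name or trademark, whether for payment or free of charge.

Deployers of AI systems (called "users" in earlier drafts):

The Act applies to organisations and individuals that use AI systems in the EU. Under Article 3(4), a deployer is any natural or legal person, public authority, agency, or other body using an AI system under its authority, except where the system is used in the course of a personal, non-professional activity.

Importers and distributors:

Entities that import or distribute AI systems on the EU market bear specific obligations under the Act. Articles 23 and 24 set out, respectively, the responsibilities of importers and distributors for ensuring compliance.

Third-country entities:

As set out in Article 2(1), the Act has extraterritorial effect: it extends to providers and deployers of AI systems located outside the EU wherever the output produced by the system is used in the EU. This ensures that AI systems affecting EU citizens are regulated.

The scope is further refined by a set of exemptions and specific application rules:

Military and defence:

Article 2(3) expressly excludes AI systems developed or used exclusively for military purposes.

Research and development:

Articles 2(6) and 2(8) exempt AI systems used solely for research and development purposes.

Legacy systems:

Article 111 sets out transitional provisions for AI systems placed on the market or put into service before the Regulation's dates of application.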

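3. Outline of the Act's provisions

The EU AI Act, which enters into force on 1 August 2024, contains 13 chapters, 113 articles, and 13 annexes.

| Original text of the Act | Chinese translation |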

| CHAPTER I GENERAL PROVISIONS | 第一章 一般规定 |

| Article 1 Subject matter | 第一条 主题事项 |

| Article 2 Scope | 第二条 范围 |

| Article 3 Definitions | 第三条 定义 |

| Article 4 AI literacy | 第四条 人工智能素养 |

| CHAPTER II PROHIBITED AI PRACTICES | 第二章 禁止性人工智能活动 |

| Article 5 Prohibited AI practices | 第五条 禁止性人工智能活动 |

| CHAPTER III HIGH-RISK AI SYSTEMS | 第三章 高风险人工智能系统 |

| SECTION 1 Classification of AI systems as high-risk | 第一节 高风险人工智能系统的分类 |

| Article 6 Classification rules for high-risk AI systems | 第六条 高风险人工智能系统的分类规则 |

| Article 7 Amendments to Annex III | 第七条 附录三修订案 |

| SECTION 2 Requirements for high-risk AI systems | 第二节 高风险人工智能的要件 |

| Article 8 Compliance with the requirements | 第八条 合规要件 |

| Article 9 Risk management system | 第九条 风险管理系统 |

| Article 10 Data and data governance | 第十条 数据和数据治理 |

| Article 11 Technical documentation | 第十一条 技术文档 |

| Article 12 Record-keeping | 第十二条 记录保存 |

| Article 13 Transparency and provision of information to deployers | 第十三条 透明度和向部署方提供信息 |

| Article 14 Human oversight | 第十四条 人类监督 |

| Article 15 Accuracy, robustness and cybersecurity | 第十五条 准确性、稳健性和网络安全 |

| SECTION 3 Obligations of providers and deployers of high-risk AI systems and other parties | 第三节 高风险人工智能系统的提供方、部署方以及其他主体的义务 |

| Article 16 Obligations of providers of high-risk AI systems | 第十六条 高风险人工智能系统提供方的义务 |

| Article 17 Quality management system | 第十七条 质量管理系统 |

| Article 18 Documentation keeping | 第十八条 文档保存 |

| Article 19 Automatically generated logs | 第十九条 自动生成日志 |

| Article 20 Corrective actions and duty of information | 第二十条 纠错措施和信息义务 |

| Article 21 Cooperation with competent authorities | 第二十一条 与主管当局合作 |

| Article 22 Authorised representatives of providers of high-risk AI systems | 第二十二条 高风险人工智能系统提供方的授权代表 |

| Article 23 Obligations of importers | 第二十三条 进口方的义务 |

| Article 24 Obligations of distributors | 第二十四条 经销方的义务 |

| Article 25 Responsibilities along the AI value chain | 第二十五条 人工智能价值链上的责任 |

| Article 26 Obligations of deployers of high-risk AI systems | 第二十六条 高风险人工智能系统部署方的义务 |

| Article 27 Fundamental rights impact assessment for high-risk AI systems | 第二十七条 高风险人工智能系统的基本权利影响评估 |

| SECTION 4 Notifying authorities and notified bodies | 第四节 通知机构和被通知机构 |

| Article 28 Notifying authorities | 第二十八条 通知机构 |

| Article 29 Application of a conformity assessment body for notification | 第二十九条 通知的符合性评定机构申请 |

| Article 30 Notification procedure | 第三十条 通知程序 |

| Article 31 Requirements relating to notified bodies | 第三十一条 与通知机构有关的要求 |

| Article 32 Presumption of conformity with requirements relating to notified bodies | 第三十二条 符合通知机构相关要求的推定 |

| Article 33 Subsidiaries of notified bodies and subcontracting | 第三十三条 通知机构的下属机构和分包 |

| Article 34 Operational obligations of notified bodies | 第三十四条 通知机构的运行义务 |

| Article 35 Identification numbers and lists of notified bodies | 第三十五条 被通知机构的识别号和名单 |

| Article 36 Changes to notifications | 第三十六条 通知的变更 |

| Article 37 Challenge to the competence of notified bodies | 第三十七条 对通知机构权限的异议 |

| Article 38 Coordination of notified bodies | 第三十八条 通知机构间的协调 |

| Article 39 Conformity assessment bodies of third countries | 第三十九条 第三国的符合性评估机构 |

| SECTION 5 Standards, conformity assessment, certificates, registration | 第五节 标准、符合性评定、证书、登记 |

| Article 40 Harmonised standards and standardisation deliverables | 第四十条 统一标准和标准化成果 |

| Article 41 Common specifications | 第四十一条 通用规格 |

| Article 42 Presumption of conformity with certain requirements | 第四十二条 符合特定要求的推定 |

| Article 43 Conformity assessment | 第四十三条 符合性评定 |

| Article 44 Certificates | 第四十四条 证书 |

| Article 45 Information obligations of notified bodies | 第四十五条 通知机构的信息义务 |

| Article 46 Derogation from conformity assessment procedure | 第四十六条 符合性评定程序的例外 |

| Article 47 EU declaration of conformity | 第四十七条 欧盟关于符合性的声明 |

| Article 48 CE marking | 第四十八条 CE标志 |

| Article 49 Registration | 第四十九条 登记 |

| CHAPTER IV TRANSPARENCY OBLIGATIONS FOR PROVIDERS AND DEPLOYERS OF CERTAIN AI SYSTEMS | 第四章 特定人工智能系统的提供方和部署方的透明度义务 |

| Article 50 Transparency obligations for providers and deployers of certain AI systems | 第五十条 特定人工智能系统的提供方和部署方的透明度义务 |

| CHAPTER V GENERAL-PURPOSE AI MODELS | 第五章 通用人工智能模型 |

| SECTION 1 Classification rules | 第一节 分类规则 |

| Article 51 Classification of general-purpose AI models as general-purpose AI models with systemic risk | 第五十一条 作为具有系统性风险的通用人工智能模型的通用人工智能模型分类 |

| Article 52 Procedure | 第五十二条 程序 |

| SECTION 2 Obligations for providers of general-purpose AI models | 第二节 通用人工智能模型提供方的义务 |

| Article 53 Obligations for providers of general-purpose AI models | 第五十三条 通用人工智能模型提供方的义务 |

| Article 54 Authorised representatives of providers of general-purpose AI models | 第五十四条 通用人工智能模型提供方的授权代表 |

| SECTION 3 Obligations of providers of general-purpose AI models with systemic risk | 第三节 具有系统性风险的通用人工智能模型的提供方的义务 |

| Article 55 Obligations of providers of general-purpose AI models with systemic risk | 第五十五条 具有系统性风险的通用人工智能模型的提供方的义务 |

| SECTION 4 Codes of practice | 第四节 业务守则 |

| Article 56 Codes of practice | 第五十六条 业务守则 |

| CHAPTER VI MEASURES IN SUPPORT OF INNOVATION | 第六章 创新支持措施 |

| Article 57 AI regulatory sandboxes | 第五十七条 人工智能监管沙盒 |

| Article 58 Detailed arrangements for, and functioning of, AI regulatory sandboxes | 第五十八条 人工智能监管沙盒的具体安排和运作方式 |

| Article 59 Further processing of personal data for developing certain AI systems in the public interest in the AI regulatory sandbox | 第五十九条 在人工智能监管沙盒中进一步处理个人数据用于开发符合公共利益的特定人工智能系统 |

| Article 60 Testing of high-risk AI systems in real world conditions outside AI regulatory sandboxes | 第六十条 在人工智能监管沙盒之外的真实环境中测试高风险人工智能系统 |

| Article 61 Informed consent to participate in testing in real world conditions outside AI regulatory sandboxes | 第六十一条 参与人工智能监管沙盒之外的真实环境测试的知情同意 |

| Article 62 Measures for providers and deployers, in particular SMEs, including start-ups | 第六十二条 针对提供方、部署方,特别是初创企业等中小企业的措施 |

| Article 63 Derogations for specific operators | 第六十三条 特定运营方的例外 |

| CHAPTER VII GOVERNANCE | 第七章 治理 |

| SECTION 1 Governance at Union level | 第一节 欧盟层面的治理 |

| Article 64 AI Office | 第六十四条 人工智能办公室 |

| Article 65 Establishment and structure of the European Artificial Intelligence Board | 第六十五条 欧洲人工智能委员会的设立和组织结构 |

| Article 66 Tasks of the Board | 第六十六条 欧洲人工智能委员会的任务 |

| Article 67 Advisory forum | 第六十七条 咨询论坛 |

| Article 68 Scientific panel of independent experts | 第六十八条 独立专家科学小组 |

| Article 69 Access to the pool of experts by the Member States | 第六十九条 欧盟成员国获取专家库支持 |

| SECTION 2 National competent authorities | 第二节 国家主管机关 |

| Article 70 Designation of national competent authorities and single points of contact | 第七十条 指定国家主管机关和单一联络点 |

| CHAPTER VIII EU DATABASE FOR HIGH-RISK AI SYSTEMS | 第八章 欧盟高风险人工智能系统数据库 |

| Article 71 EU database for high-risk AI systems listed in Annex III | 第七十一条 附录三所列高风险人工智能系统的欧盟数据库 |

| CHAPTER IX POST-MARKET MONITORING, INFORMATION SHARING AND MARKET SURVEILLANCE | 第九章 上市后监测、信息共享和市场监督 |

| SECTION 1 Post-market monitoring | 第一节 上市后监测 |

| Article 72 Post-market monitoring by providers and post-market monitoring plan for high-risk AI systems | 第七十二条 提供方的上市后监测和高风险人工智能系统上市后监测计划 |

| SECTION 2 Sharing of information on serious incidents | 第二节 重大事件信息共享 |

| Article 73 Reporting of serious incidents | 第七十三条 重大事件的报告 |

| SECTION 3 Enforcement | 第三节 实施 |

| Article 74 Market surveillance and control of AI systems in the Union market | 第七十四条 欧盟市场中的人工智能系统的市场监督和控制 |

| Article 75 Mutual assistance, market surveillance and control of general-purpose AI systems | 第七十五条 通用人工智能系统的互助、市场监督和控制 |

| Article 76 Supervision of testing in real world conditions by market surveillance authorities | 第七十六条 市场监管机构对真实环境中测试的监督 |

| Article 77 Powers of authorities protecting fundamental rights | 第七十七条 各机构保护基本权利的权力 |

| Article 78 Confidentiality | 第七十八条 保密 |

| Article 79 Procedure at national level for dealing with AI systems presenting a risk | 第七十九条 国家层面对发生风险的人工智能系统的处理程序 |

| Article 80 Procedure for dealing with AI systems classified by the provider as non-high-risk in application of Annex III | 第八十条 适用附录三处理被提供方归类为非高风险人工智能系统的程序 |

| Article 81 Union safeguard procedure | 第八十一条 欧盟的保护程序 |

| Article 82 Compliant AI systems which present a risk | 第八十二条 发生风险的合规人工智能系统 |

| Article 83 Formal non-compliance | 第八十三条 违规的处理措施 |

| Article 84 Union AI testing support structures | 第八十四条 欧盟的人工智能测试支持体系 |

| SECTION 4 Remedies | 第四节 救济 |

| Article 85 Right to lodge a complaint with a market surveillance authority | 第八十五条 向市场监管机构申诉的权利 |

| Article 86 Right to explanation of individual decision-making | 第八十六条 个人决策的解释权 |

| Article 87 Reporting of infringements and protection of reporting persons | 第八十七条 侵权行为举报和举报人保护 |

| SECTION 5 Supervision, investigation, enforcement and monitoring in respect of providers of general-purpose AI models | 第五节 对通用人工智能模型提供方的监督、调查、执行和监测 |

| Article 88 Enforcement of the obligations of providers of general-purpose AI models | 第八十八条 通用人工智能模型提供方的义务的履行 |

| Article 89 Monitoring actions | 第八十九条 活动监测 |

| Article 90 Alerts of systemic risks by the scientific panel | 第九十条 科学小组对系统性风险的提示 |

| Article 91 Power to request documentation and information | 第九十一条 要求提供文档和信息的权力 |

| Article 92 Power to conduct evaluations | 第九十二条 实施评估的权力 |

| Article 93 Power to request measures | 第九十三条 要求采取措施的权力 |

| Article 94 Procedural rights of economic operators of the general-purpose AI model | 第九十四条 通用人工智能模型的经济运营者的程序性权利 |

| CHAPTER X CODES OF CONDUCT AND GUIDELINES | 第十章 行为守则和指南 |

| Article 95 Codes of conduct for voluntary application of specific requirements | 第九十五条 自愿适用特定要求的行为守则 |

| Article 96 Guidelines from the Commission on the implementation of this Regulation | 第九十六条 欧盟委员会关于适用本法案的指南 |

| CHAPTER XI DELEGATION OF POWER AND COMMITTEE PROCEDURE | 第十一章 授权和委员会程序 |

| Article 97 Exercise of the delegation | 第九十七条 授权的行使 |

| Article 98 Committee procedure | 第九十八条 委员会程序 |

| CHAPTER XII PENALTIES | 第十二章 罚则 |

| Article 99 Penalties | 第九十九条 罚则 |

| Article 100 Administrative fines on Union institutions, bodies, offices and agencies | 第一百条 对欧盟各组织机构的行政罚款 |

| Article 101 Fines for providers of general-purpose AI models | 第一百零一条 对通用人工智能模型提供方的罚款 |

| CHAPTER XIII FINAL PROVISIONS | 第十三章 最后条款 |

| Article 102 Amendment to Regulation (EC) No 300/2008 | 第一百零二条 本法对欧洲议会和欧盟理事会第300/2008号《关于民用航空安全领域的共同规则条例》的修订 |

| Article 103 Amendment to Regulation (EU) No 167/2013 | 第一百零三条 本法对欧洲议会和欧盟理事会第167/2013号《关于农业和林业车辆的批准和市场监督条例》的修订 |

| Article 104 Amendment to Regulation (EU) No 168/2013 | 第一百零四条 本法对欧洲议会和欧盟理事会第168/2013号《关于两轮或三轮车辆和四轮摩托车的批准和市场监督条例》的修订 |

| Article 105 Amendment to Directive 2014/90/EU | 第一百零五条 本法对欧洲议会和欧盟理事会第2014/90/EU号《关于海洋设备的指令》的修订 |

| Article 106 Amendment to Directive (EU) 2016/797 | 第一百零六条 本法对欧洲议会和欧盟理事会第(EU)2016/797号《关于欧盟内部铁路系统互操作性的指令》的修订 |

| Article 107 Amendment to Regulation (EU) 2018/858 | 第一百零七条 本法对欧洲议会和欧盟理事会第2018/858号《关于机动车辆及其拖车以及用于此类车辆的系统、部件和独立技术套件的批准和市场监督条例》的修订 |

| Article 108 Amendments to Regulation (EU) 2018/1139 | 第一百零八条 本法对欧洲议会和欧盟理事会第2018/1139号《关于民用航空领域共同规则和建立欧盟航空安全局条例》的修订 |

| Article 109 Amendment to Regulation (EU) 2019/2144 | 第一百零九条 本法对欧洲议会和欧盟理事会第2019/2144号《关于机动车辆及其拖车以及用于此类车辆的系统、部件和独立技术套件的型式批准要求条例》的修订 |

| Article 110 Amendment to Directive (EU) 2020/1828 | 第一百一十条 本法对欧洲议会和欧盟理事会第2020/1828号《关于保护消费者集体利益的代表性行动的指令》的修订 |

| Article 111 AI systems already placed on the market or put into service and general-purpose AI models already placed on the marked | 第一百一十一条 已投放到市场或投入使用的人工智能系统和已投放到市场的通用人工智能模型 |

| Article 112 Evaluation and review | 第一百一十二条 评估和审查 |

| Article 113 Entry into force and application | 第一百一十三条 生效和适用 |

| ANNEX I List of Union harmonisation legislation | 附录一 联盟统一立法清单 |

| ANNEX II List of criminal offences referred to in Article 5(1), first subparagraph, point (h)(iii) | 附录二 第五条第1款第(h)(iii)项所规定的刑事犯罪清单 |

| ANNEX III High-risk AI systems referred to in Article 6(2) | 附录三 第六条第2款规定的高风险人工智能系统 |

| ANNEX IV Technical documentation referred to in Article 11(1) | 附录四 第十一条第1款规定的技术文档 |

| ANNEX V EU declaration of conformity | 附录五 欧盟符合性声明 |

| ANNEX VI Conformity assessment procedure based on internal control | 附录六 基于内部控制的符合性评定程序 |

| ANNEX VII Conformity based on an assessment of the quality management system and an assessment of the technical documentation | 附录七 基于质量管理体系评估和技术文档评估的符合性 |

| ANNEX VIII Information to be submitted upon the registration of high-risk AI systems in accordance with Article 49 | 附录八 根据第四十九条规定登记高风险人工智能系统时应提交的信息 |

| ANNEX IX Information to be submitted upon the registration of high-risk AI systems listed in Annex III in relation to testing in real world conditions in accordance with Article 60 | 附录九 附录三所列高风险人工智能系统登记后根据第六十条规定在真实环境中进行测试时应提交的信息 |

| ANNEX X Union legislative acts on large-scale IT systems in the area of Freedom, Security and Justice | 附录十 关于自由、安全和司法领域大规模信息技术系统的欧盟立法文件 |

| ANNEX XI Technical documentation referred to in Article 53(1), point (a) — technical documentation for providers of general-purpose AI models | 附录十一 第五十三条第1款第(a)项规定的技术文档——通用人工智能模型提供者的技术文档 |

| ANNEX XII Transparency information referred to in Article 53(1), point (b) — technical documentation for providers of general-purpose AI models to downstream providers that integrate the model into their AI system | 附录十二 第五十三条第1款第(b)项规定的透明度信息——为通用人工智能模型提供者提供给其下游要将该模型集成到其人工智能系统中的下游提供者的技术文档 |

| ANNEX XIII Criteria for the designation of general-purpose AI models with systemic risk referred to in Article 51 | 附录十三 第五十一条规定的具有系统性风险的通用人工智能模型的指定标准 |

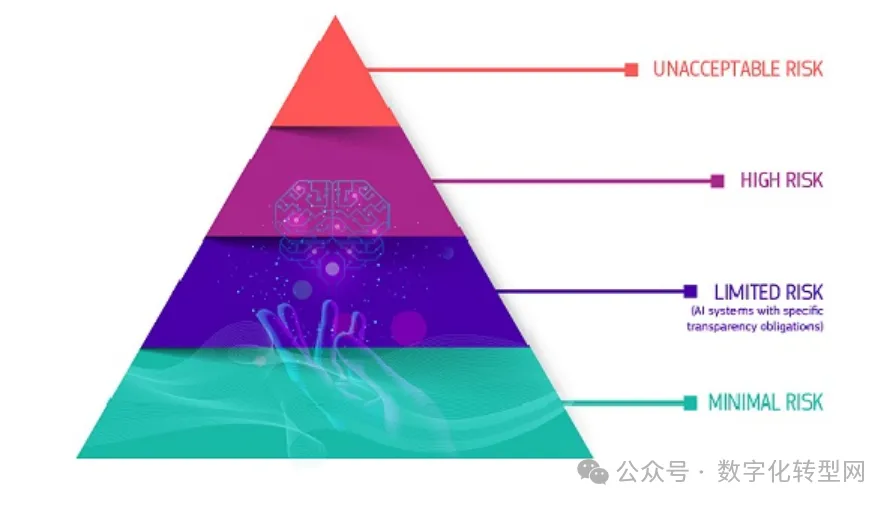

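4. Risk-based approach

The EU AI Act takes a risk-based approach, sorting AI systems into four tiers according to their potential impact on individuals' rights and safety.

(1) Unacceptable risk.

AI systems that pose a clear threat to people's safety, livelihoods, and fundamental rights. They are prohibited under Article 5 of the Act.

Examples include social scoring systems, systems that exploit the vulnerabilities of specific individuals or groups, systems that use subliminal techniques operating below a person's conscious awareness, real-time remote biometric identification in public spaces, predictive policing systems that target individuals, and emotion recognition systems in workplaces and educational institutions.

(2) High risk.

AI systems that may endanger safety or fundamental rights or cause significant adverse effects. They are subject to the strict compliance and transparency obligations in Chapter III of the Act (Articles 6 to 49).

Examples include AI used in critical infrastructure, education, employment, essential services, law enforcement, migration management, and the administration of justice. Whether a system is high-risk depends on its function, intended purpose, and mode of operation. Providers of such systems must complete a conformity assessment procedure before the system can be sold or used in the EU.

(3) Limited risk.

AI systems that require specific transparency measures. The transparency obligations are set out in Article 50 of the Act.

Examples include chatbots and emotion recognition systems. Such systems are subject to information and transparency requirements. Deployers of AI systems that generate or manipulate image, audio, or video content (deepfakes) must disclose that the content has been artificially generated or manipulated, except in specific cases (for example, where the system is used to prevent criminal offences). Providers of AI systems that generate large quantities of synthetic content must implement techniques and methods (such as watermarking) that are sufficiently reliable, interoperable, effective, and robust, so that outputs can be marked and detected as generated or manipulated by an AI system rather than by a human.

(4) Minimal risk.

AI applications that pose minimal or no risk to citizens' rights or safety, such as AI-enabled video games and spam filters. No legal requirements apply beyond existing law. According to the European Commission, the vast majority of AI systems currently used, or likely to be used, in the EU fall into this minimal-risk tier.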
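As a rough illustration of the four-tier structure, the sketch below models the tiers as a Python enumeration and maps a few of the example use cases named above to a tier. The names `RiskTier`, `CONSEQUENCE`, `EXAMPLES`, and `consequence_for` are hypothetical, and the lookup table is a teaching aid only: classifying a real system requires legal analysis of its intended purpose, not a dictionary lookup.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# One-line summary of what each tier means, paraphrased from the article.
CONSEQUENCE = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "strict compliance duties and conformity assessment",
    RiskTier.LIMITED: "information and transparency duties",
    RiskTier.MINIMAL: "no requirements beyond existing law",
}

# Illustrative use cases taken from the examples listed in the article.
EXAMPLES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "critical infrastructure": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def consequence_for(use_case: str) -> str:
    """Look up the illustrative tier for a use case and describe its consequence."""
    tier = EXAMPLES[use_case]
    return f"{tier.value} risk: {CONSEQUENCE[tier]}"


print(consequence_for("chatbot"))  # limited risk: information and transparency duties
```

An enumeration keeps the set of tiers closed, which mirrors the Act's fixed four-level scheme; an unknown use case raises `KeyError` rather than silently defaulting to a tier.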

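5. Key provisions of the Act

The Act introduces several key provisions that stakeholders must comply with, including:

Risk-based classification:

AI systems are classified by level of risk, with specific requirements for high-risk systems covering transparency, data governance, documentation, and human oversight.

Prohibited practices:

Certain AI practices are deemed unacceptable and are banned, such as systems that manipulate human behaviour to circumvent users' free will, or that enable "social scoring" by governments.

Transparency obligations:

AI systems that interact with humans, detect emotions, or assign people to social categories based on biometric data must be designed to be transparent.

Data governance:

High-risk AI systems must be trained, validated, and tested on high-quality datasets that are relevant, representative, unbiased, and respectful of privacy.

Market surveillance:

Member States must designate national competent authorities to carry out market surveillance and ensure that the Act is enforced.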

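6. Key definitions in the Act

AI system:

A machine-based system that is designed to operate with varying levels of autonomy, that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.

Widespread infringement:

Any act or omission contrary to Union law protecting the interests of individuals that harms, or is likely to harm, the collective interests of individuals in more than one Member State.

Risk:

The combination of the probability of harm occurring and the severity of that harm.

Placing on the market:

The first making available of an AI system or a general-purpose AI model on the EU market.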

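7. Prohibited AI practices

The practices banned under Article 5 include:

Deploying subliminal techniques beyond a person's consciousness, or purposefully manipulative or deceptive techniques,

with the objective or effect of materially distorting the behaviour of a person or group of persons by appreciably impairing their ability to make an informed decision, thereby causing them to take a decision they would not otherwise have taken, in a manner that causes or is likely to cause significant harm to that person, another person, or a group of persons;

Exploiting any vulnerabilities of a specific person or group of persons due to their age, disability, or a specific social or economic situation,

with the objective or effect of materially distorting the behaviour of that person, or of a person belonging to that group, in a manner that causes or is reasonably likely to cause significant harm to that person or another person.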

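8. Fines for providers of general-purpose AI models

The Commission may fine a provider of a general-purpose AI model up to 3% of its total worldwide turnover in the preceding financial year or EUR 15,000,000, whichever is higher.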
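The "whichever is higher" rule is simple arithmetic, sketched below in Python. The function name `max_gpai_fine` is hypothetical, amounts are whole euros, and the result is only the upper limit stated above; the fine actually imposed in a given case may be lower.

```python
FIXED_CAP_EUR = 15_000_000   # fixed amount named in the article
TURNOVER_PERCENT = 3         # percentage of total worldwide annual turnover


def max_gpai_fine(worldwide_turnover_eur: int) -> int:
    """Upper limit of the fine: the higher of 3% of turnover and EUR 15,000,000."""
    turnover_based = worldwide_turnover_eur * TURNOVER_PERCENT // 100
    return max(turnover_based, FIXED_CAP_EUR)


print(max_gpai_fine(200_000_000))    # 15000000 (3% would be only 6,000,000)
print(max_gpai_fine(1_000_000_000))  # 30000000
```

The two limits meet at a turnover of EUR 500 million: below that, the fixed EUR 15 million figure is the ceiling, and above it the 3% figure takes over.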

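9. Countries move in step to introduce AI policies

Before agreement on the Act was reached, EU Member States and Members of the European Parliament had spent years debating how AI should be governed. The European Commission proposed the Artificial Intelligence Act in 2021. After ChatGPT was released in late 2022, regulating AI became urgent, and countries including China, the United States, and the United Kingdom moved quickly to build rules for AI governance.

On 30 October 2023, the United States issued the Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence, setting out new standards for AI safety. The order advances the development and use of AI according to eight guiding principles and priorities: setting new safety standards for AI; protecting Americans' privacy; advancing equity and civil rights; protecting consumers, patients, and students; supporting workers; promoting innovation and competition; advancing American leadership abroad; and ensuring that government uses AI responsibly and effectively.

Some legal experts see the executive order as, on the one hand, strengthening the government's say over industrial and supply chains and, on the other, enabling safety and risk to be traced and controlled through information disclosure and intelligence sharing.

In July 2023, the Cyberspace Administration of China and six other agencies jointly issued the Interim Measures for the Management of Generative Artificial Intelligence Services (《生成式人工智能服务管理暂行办法》), effective 15 August 2023. On 18 October 2023, the Office of the Central Cyberspace Affairs Commission released the Global AI Governance Initiative (《全球人工智能治理倡议》), which sets out 11 proposals across three dimensions: development, security, and governance. Among other things, it supports using AI technology to guard against AI risks and calls for a balanced view of AI, which may have "far-reaching impact" while also bringing "unpredictable risks and complex challenges".

Notably, among the more than 20 points of consensus reached after the Chinese and US heads of state met in San Francisco in November 2023, one deals specifically with AI: the two sides agreed to establish an intergovernmental dialogue mechanism on AI.

That same month, at the first global AI Safety Summit, China, 27 other countries, and the European Union signed the Bletchley Declaration, a major agreement on AI that promotes "a shared understanding of the opportunities and risks posed by frontier AI and the need for governments to work together to meet the most significant challenges".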



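This article was republished by Digital Transformation Network (www.szhzxw.cn) from the Digital Transformation Network WeChat official account. Editing and translation: 宁檬树, Digital Transformation Network.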